Getting an agent running has always meant solving a long list of infrastructure problems before you can test whether the agent itself is any good. You wire up frameworks, storage, authentication, and deployment pipelines, and by the time your agent handles its first real task, you’ve spent days on infrastructure instead of agent logic.

We built AgentCore from the ground up to help developers focus on building agent logic instead of backend plumbing, working with frameworks and models they already use, including LangGraph, LlamaIndex, CrewAI, Strands Agents, and more. Today, we’re introducing new capabilities that further streamline the agent building experience, removing the infrastructure barriers that slow teams down at every stage of agent development from the first prototype through production deployment.

Go from idea to a running agent in three steps

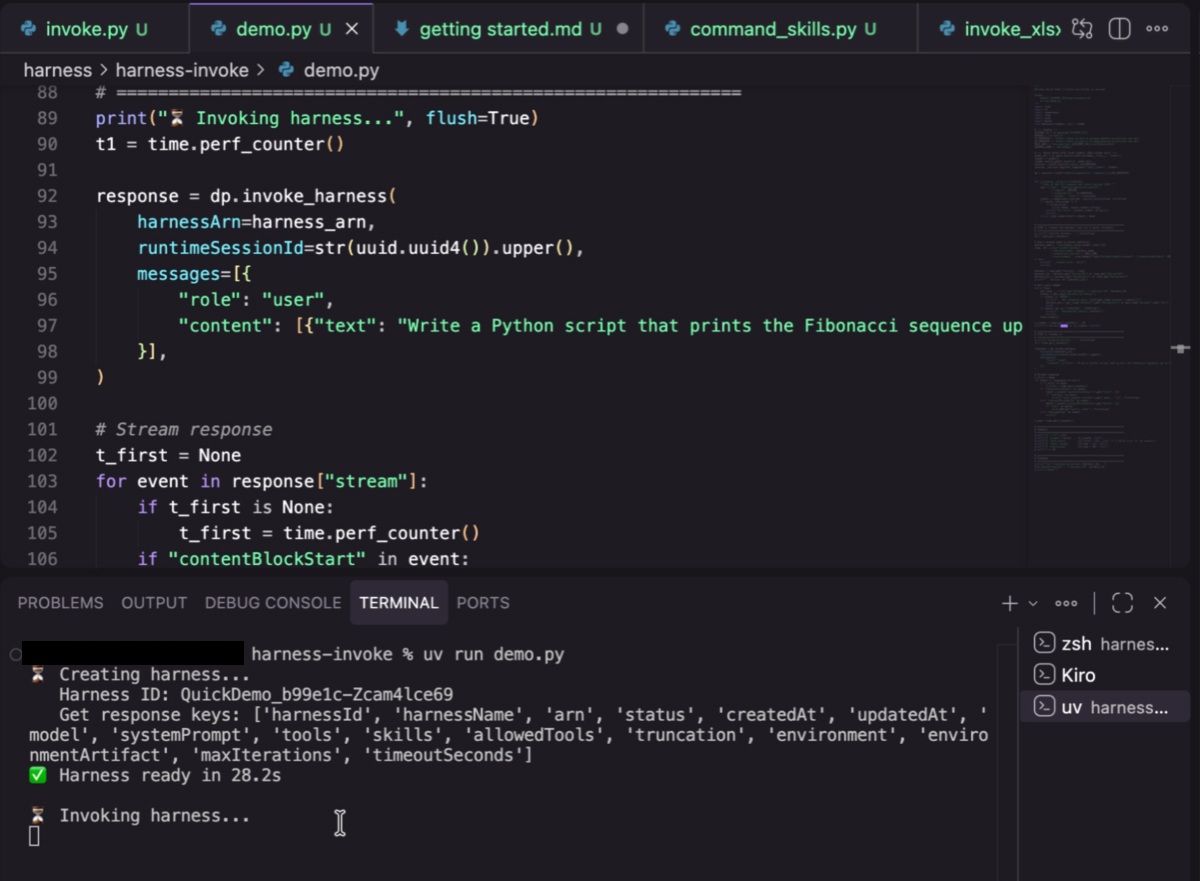

Every agent has an orchestration layer which contains the loop that calls the model, decides which tool to invoke, passes results back, manages context windows, and handles failures. Running that loop requires infrastructure underneath it: compute to host the agent, a sandbox to safely execute code, secure connections to tools, persistent storage, and error recovery. This infrastructure forms the agent harness, enabling an agent to actually run.

Until now, building that harness was the first thing every team had to do from scratch. That meant choosing a framework, writing the orchestration code, connecting it to tools and memory, and ensuring authentication, all before the agent could process a single request. It’s necessary work, but it’s not the work that tells you whether your agent is going to be useful. Most teams we’ve worked with spent days on this infrastructure before they could run their first real test.

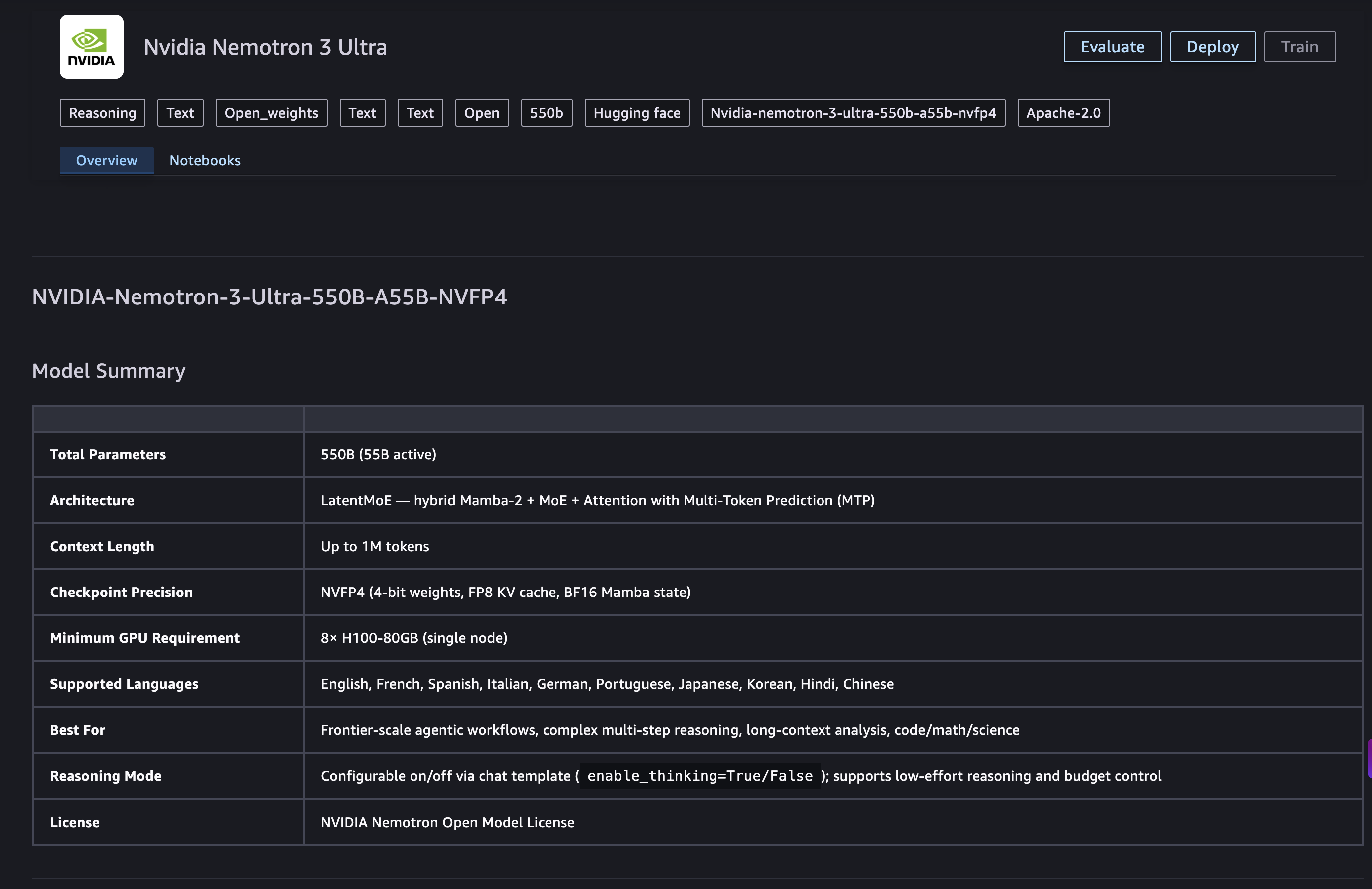

The new managed agent harness feature in AgentCore replaces all that upfront build with a straightforward configuration. You declare your agent and run it in just three API calls, without writing orchestration code. You define what your agent does: which model it uses, which tools it can call, and what instructions it follows. AgentCore’s harness stitches together compute, tooling, memory, identity, and security to create a running agent that you can test in minutes. Trying a different model or adding a tool is a config change, not a code rewrite. You can test several variations of an agent in minutes by changing the API parameter on the fly.

That speed doesn’t come at the cost of flexibility. The harness in AgentCore is powered by Strands Agents, the open source framework from AWS. When you need custom orchestration logic, specialized routing, or multi-agent coordination, you switch from config to code-defined harness, with the same platform, same microVM isolation, same deployment pipeline. AgentCore persists session state to a durable filesystem, so agents can suspend mid-task and resume exactly where they left off. This makes human-in-the-loop patterns practical without custom plumbing, and without redesigning the agent later when those needs arise. You can get started in minutes then add more capabilities and control when your needs evolve, without any rearchitecture.

“We’re building AI agents that will revolutionize ecommerce”, said Rodrigo Moreira, VP of Engineering, VTEX. “Previously, prototyping each new agent required days of orchestration code and infrastructure setup before we could validate an idea. The harness feature in AgentCore will change that: swapping a model, adding a tool, or refining an agent’s instructions is now a configuration change, not a rebuild. We can now validate agent ideas in minutes instead of days, and we’re looking forward to accelerating agent development further with these new capabilities”.

Build, deploy, operate your agents from the same terminal

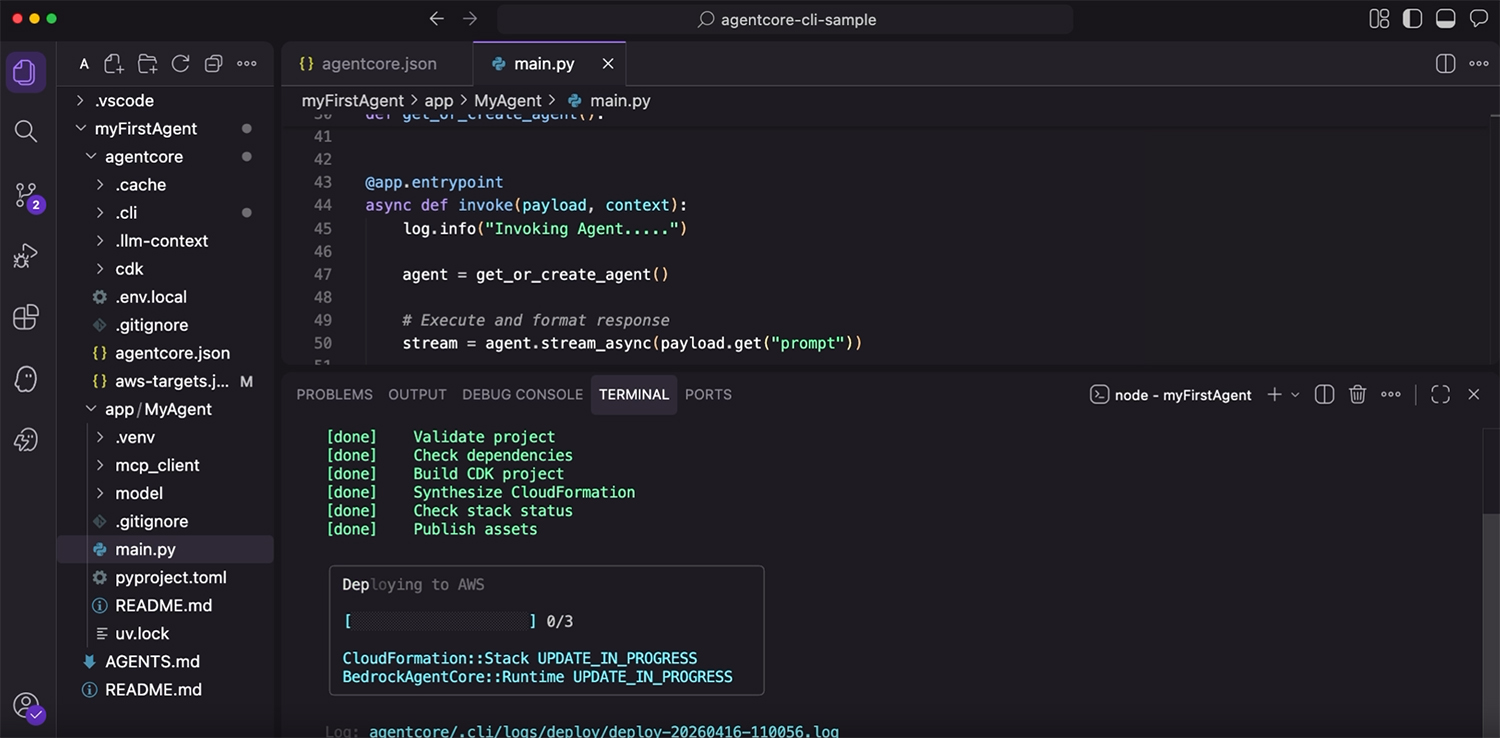

You’ve got your agent working, and now you want to run it in production. That usually means stepping out of your editor, setting up a deployment pipeline, configuring environments, and stitching together a process that looks nothing like the workflow you used to build the agent in the first place.

The new AgentCore CLI keeps you in one workflow across the full lifecycle: prototype, deploy, operate, from the same terminal that you’re already working in. You iterate on your agent locally, and when it’s ready, you deploy it without switching tools or building a separate pipeline. AgentCore powers deployments through infrastructure as code (IaC) with CDK support and Terraform (coming soon), so your agent configuration is reproducible and version-controlled. What you tested locally is exactly what runs in production.

Give your coding agents the right context

Throughout the agent development journey, most developers are working alongside a coding assistant, such as Claude Code or Kiro. But a coding assistant is only as effective as the context it has. A general-purpose MCP server can give it access to APIs and documentation, but it doesn’t encode the opinions that matter: which patterns to use, how capabilities fit together, what the recommended path looks like for common tasks. New pre-built skills in AgentCore go beyond raw API access. They give coding agents curated, current knowledge of AgentCore best practices, so the suggestions you get reflect how the platform is meant to be used, not only what endpoints exist. Kiro already includes this today as a built-in Power, with Plugins for Claude Code, Codex, and Cursor coming soon. On a platform that evolves quickly, having accurate context in your coding agent means fewer wrong turns from the very first line of code.

Get started

The managed agent harness in AgentCore is available in preview today in four AWS Regions: US West (Oregon), US East (N. Virginia), Asia Pacific (Sydney), and Europe (Frankfurt). AgentCore CLI and persistent agent filesystem, are available in all AWS commercial Regions where AgentCore is offered. Coding agent skills will be available by the end of April. You pay only for the resources that you use, with no additional charge for the CLI, harness, or skills (learn more in AgentCore pricing page). Visit AgentCore Documentation to get started.

You can use these capabilities to stay focused on agent logic, without worrying about the infrastructure setup. As your agent evolves, you add evaluations, memory, tool connections, and policy enforcement without rearchitecting. The platform that you prototype on is the same one you run in production.

About the authors