NVIDIA Nemotron 3 Ultra now available on Amazon SageMaker JumpStart

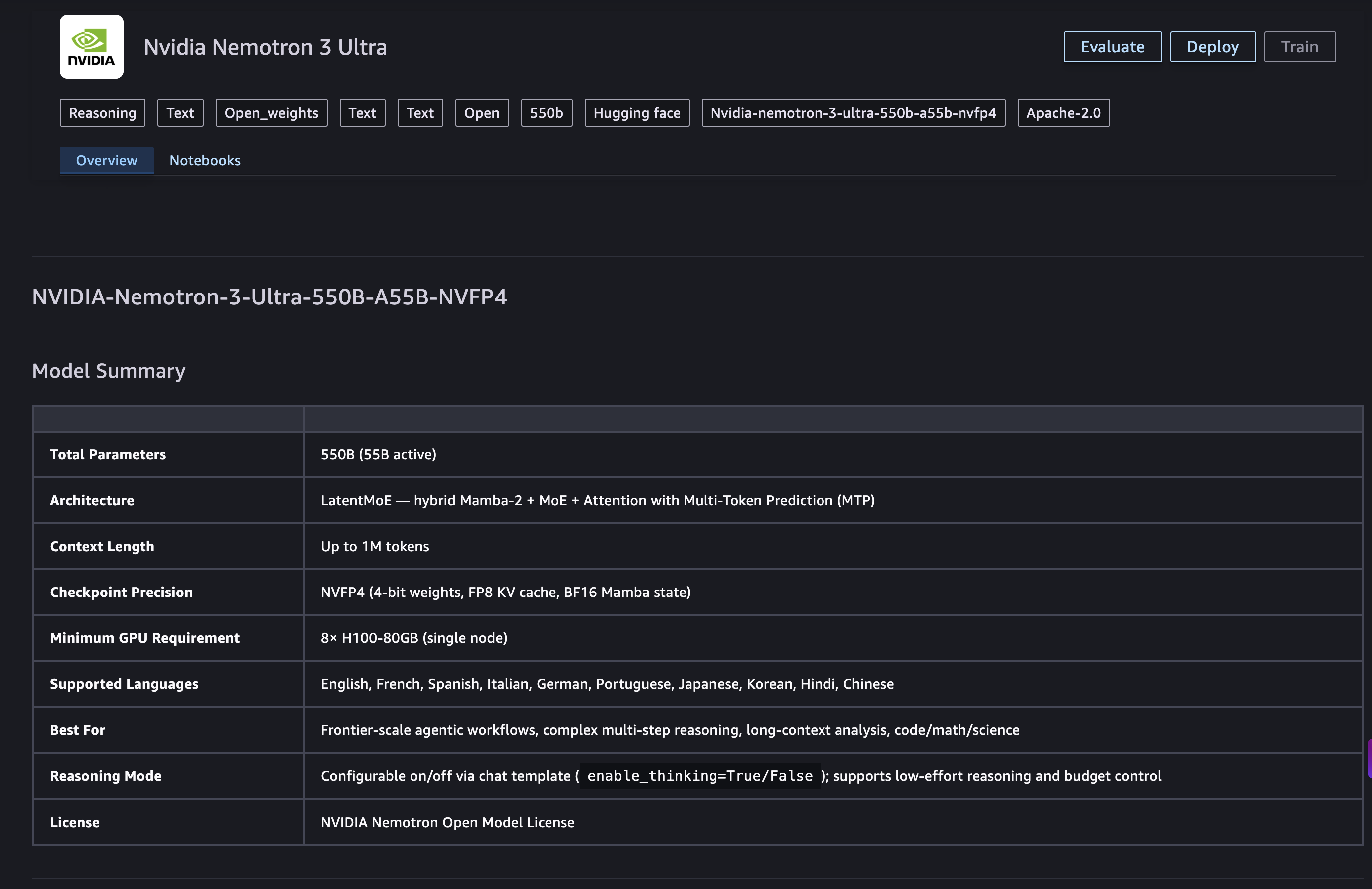

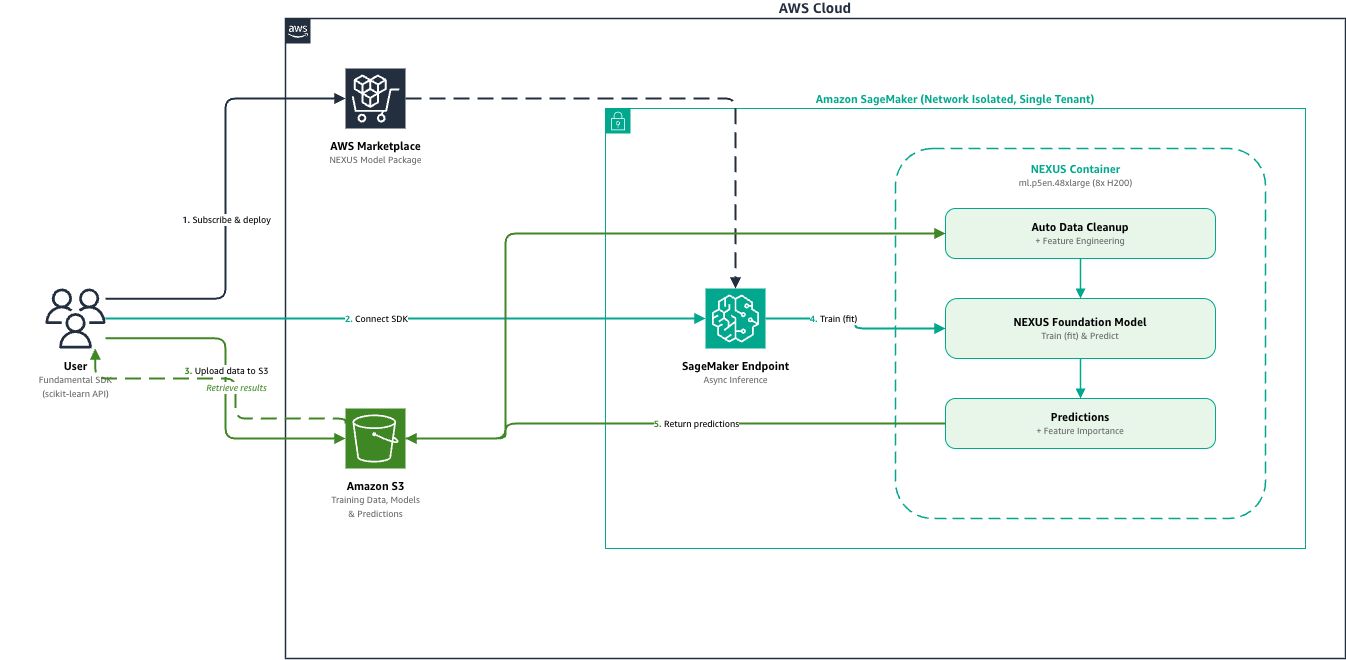

Today, we are excited to announce the day-zero availability of NVIDIA Nemotron 3 Ultra on Amazon SageMaker JumpStart. With this launch, you can deploy Nemotron 3 Ultra through a one-click deployment experience. Nemotron 3 Ultra is an open model built for frontier reasoning and orchestration in long-running autonomous agents, delivering 5x faster …