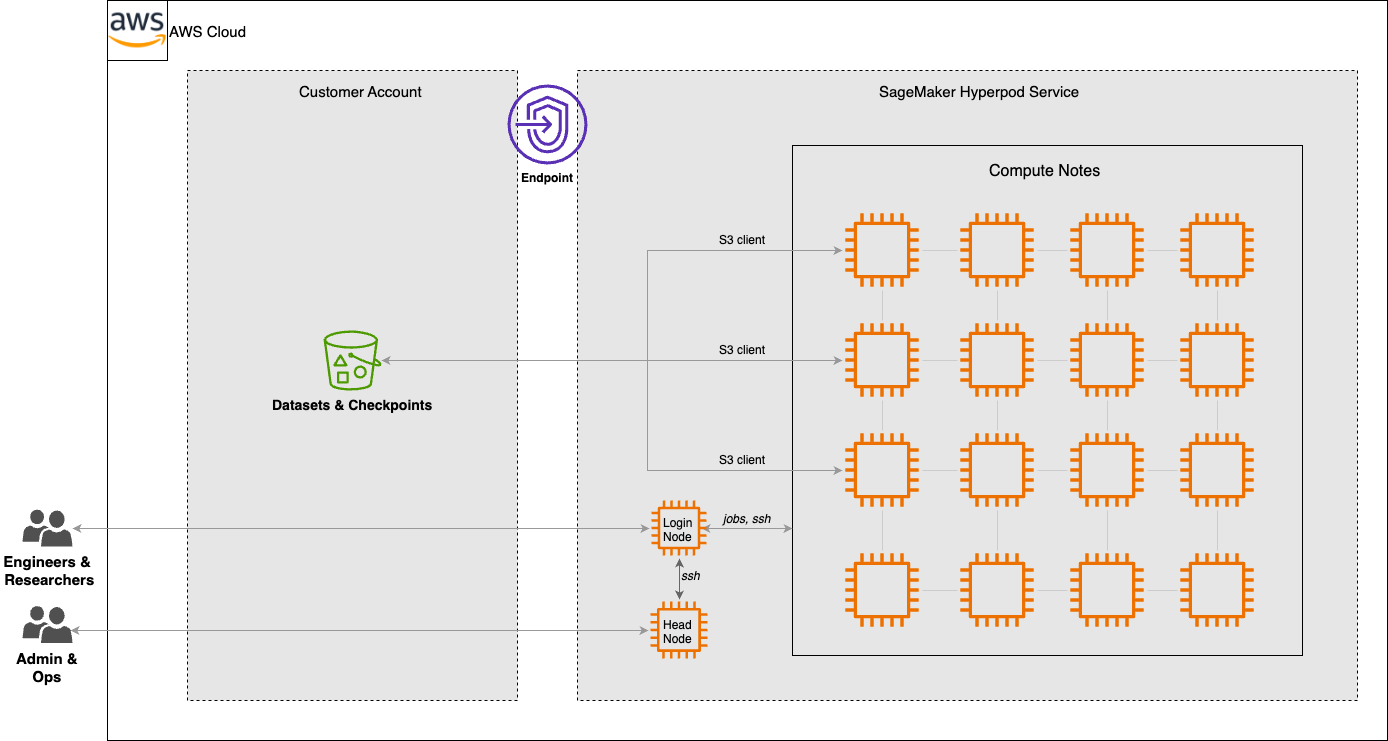

Accelerate agentic tool calling with serverless model customization in Amazon SageMaker AI

Agentic tool calling is what makes AI agents useful in production. It’s how they query databases, trigger workflows, retrieve real-time data, and act on a user’s behalf. But base models frequently hallucinate tools that don’t exist, pass malformed or incorrect parameters, and attempt actions when they should ask for clarification. These failures erode trust and block production deployment. You can …