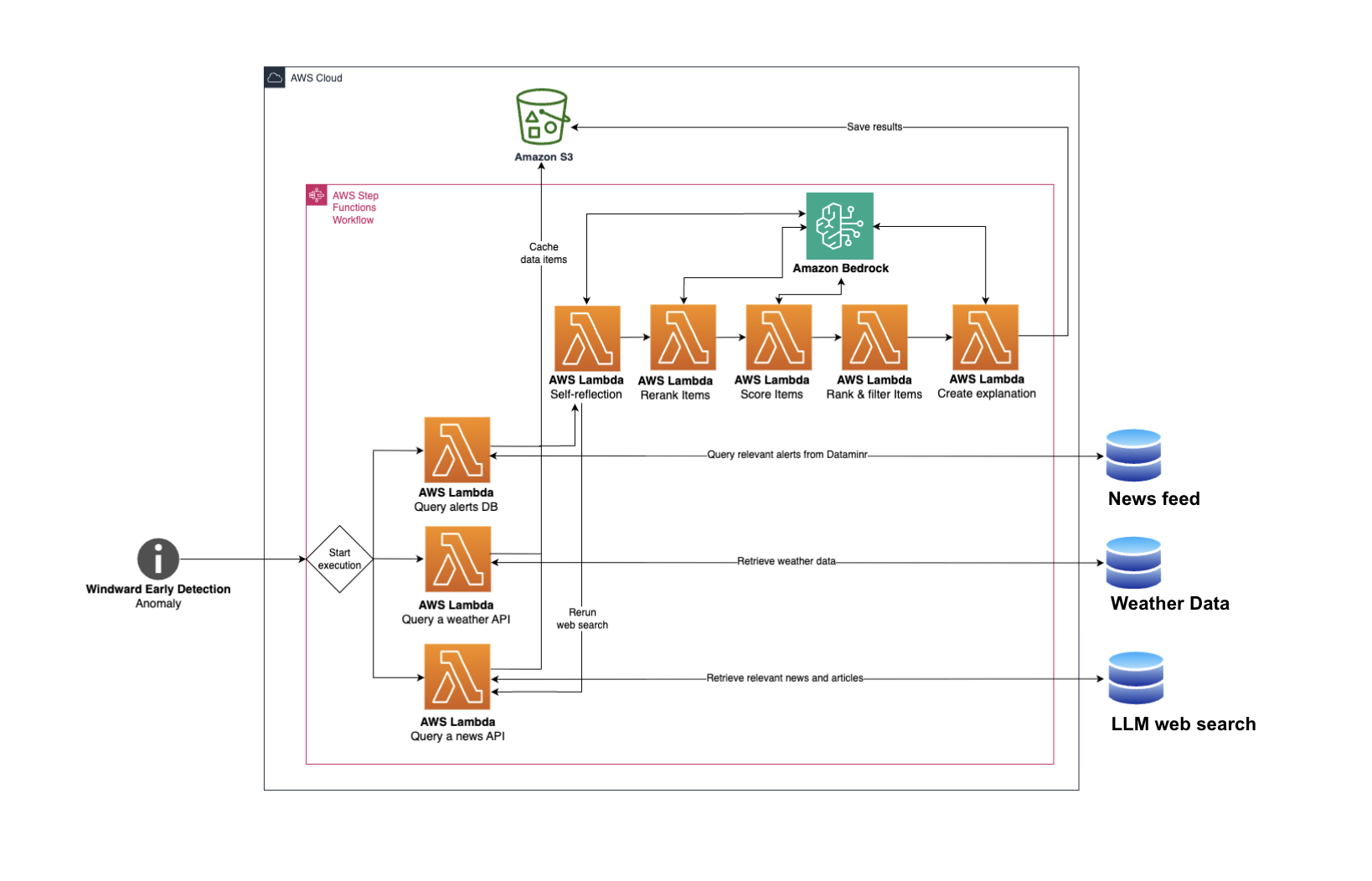

From isolated alerts to contextual intelligence: Agentic maritime anomaly analysis with generative AI

This post is co-written with Arad Ben Haim and Hannah Danan Moise from Windward. Windward is a leading Maritime AI company, delivering mission-grade, multi-source intelligence for maritime-based operations. By fusing Automatic Identification System (AIS) data, remote sensing signals, proprietary AI models, and generative AI, Windward provides a 360° view of global maritime activity so defense …