Amazon Quick: Accelerating the path from enterprise data to AI-powered decisions

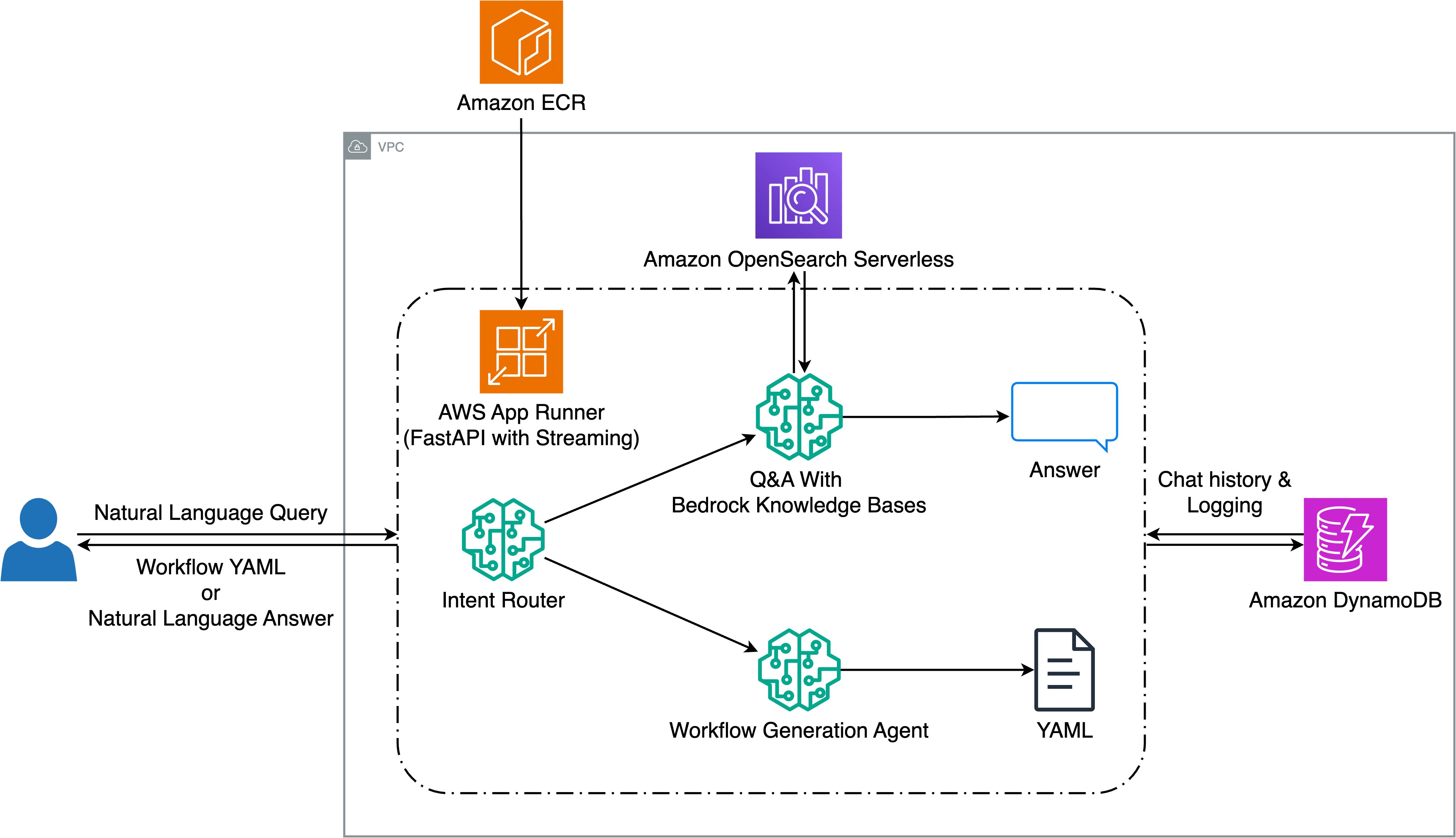

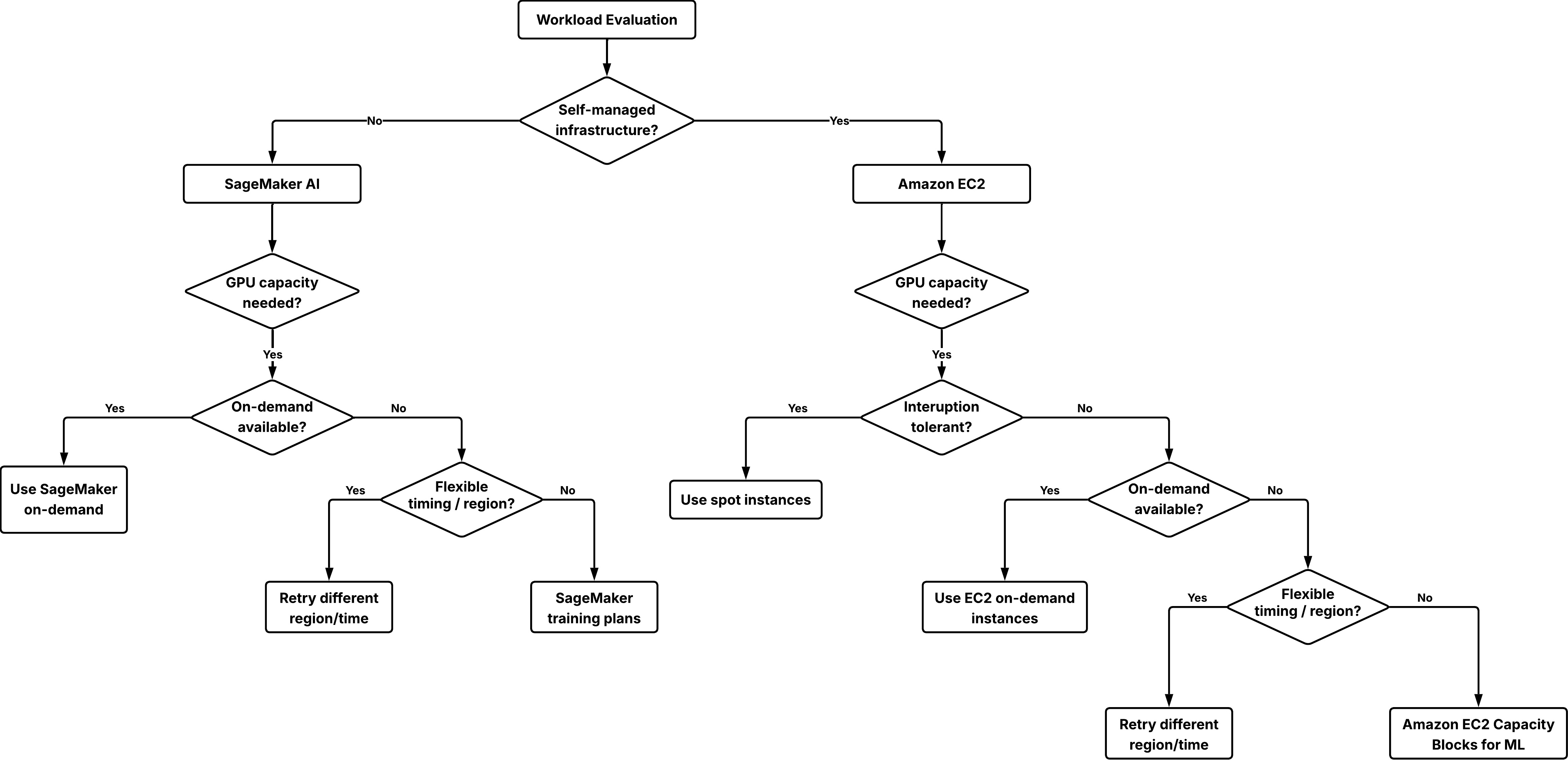

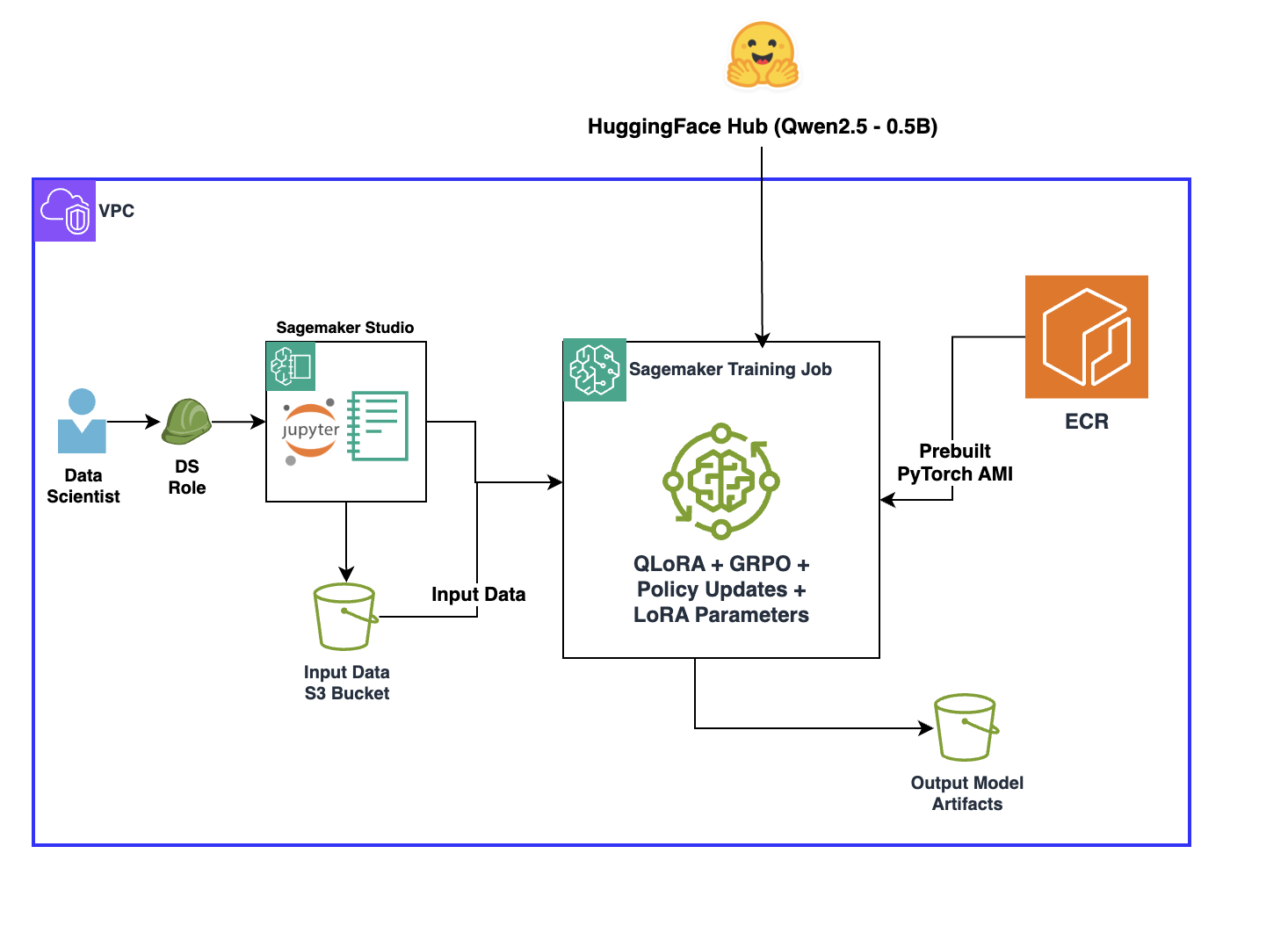

Enterprises with tens of millions of rows of data, row-level and column-level security, and dozens of datasets spanning multiple business domains need AI-generated answers that are trustworthy, reproducible, and fast, and that consistently respect governance rules. With foundation models (FMs), organizations can build systems that work well for small datasets where a business user asks a question …
