Modern large language model (LLM) deployments face an escalating cost and performance challenge driven by token count growth. Token count, which is directly related to word count, image size, and other input factors, determines both computational requirements and costs. Longer contexts translate to higher expenses per inference request. This challenge has intensified as frontier models now support up to 10 million tokens to accommodate growing context demands from Retrieval Augmented Generation (RAG) systems and coding agents that require extensive code bases and documentation. However, industry research reveals that a significant portion of token count across inference workloads is repetitive, with the same documents and text spans appearing across numerous prompts. These data “hot spots” represent an opportunity. By caching frequently reused content, organizations can achieve cost reductions and performance improvements for their long-context inference workloads.

AWS recently released significant updates to the Large Model Inference (LMI) container, delivering comprehensive performance improvements, expanded model support, and streamlined deployment capabilities for customers hosting LLMs on AWS. These releases focus on reducing operational complexity while delivering measurable performance gains across popular model architectures.

LMCache support: transforming long-context performance

One of the most significant capabilities introduced across the newest releases of LMI is LMCache support, which changes how organizations can handle long-context inference workloads. LMCache is an open source key-value (KV) caching solution that extracts and stores the KV caches generated by modern LLM engines and shares them across engines and queries to help improve inference performance.

Unlike traditional prefix-only caching systems, LMCache can reuse the KV cache of any repeated text span, not only a shared prefix, within a serving engine instance. The system operates at the chunk level, identifying commonly repeated text spans across documents or conversations and storing their precomputed KV cache. It maintains an internal index that maps token sequences to cached KV entries, and it supports multi-tiered storage spanning GPU memory, CPU memory, and disk or remote backends.

The newest releases of LMI introduce automatic LMCache configuration, which streamlines KV cache deployment and optimization. This low-code no-code (LCNC) interface helps customers enable the feature without complex manual configuration. By offloading KV cache from GPU memory to CPU RAM or NVMe storage, LMCache enables efficient handling of long-context scenarios while helping deliver latency improvements.
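To make the chunk-level index idea concrete, the following is a minimal Python sketch. It is not LMCache's implementation: the class name `ToyChunkIndex`, the tiny chunk size, and the string placeholders standing in for KV tensors are all assumptions made for illustration. It also ignores how a real engine makes non-prefix KV entries valid for reuse.

```python
import hashlib
from typing import Dict, List, Tuple

CHUNK_SIZE = 4  # tokens per chunk; tiny on purpose, for illustration only


def chunk_key(tokens: List[int]) -> str:
    """Hash a chunk of token IDs into a stable lookup key."""
    raw = ",".join(str(t) for t in tokens).encode("utf-8")
    return hashlib.sha256(raw).hexdigest()


class ToyChunkIndex:
    """Hypothetical index mapping hashed token chunks to placeholder KV entries."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}

    def _chunks(self, tokens: List[int]) -> List[List[int]]:
        # Only full chunks are cacheable; a trailing partial chunk is skipped.
        full = len(tokens) - len(tokens) % CHUNK_SIZE
        return [tokens[i:i + CHUNK_SIZE] for i in range(0, full, CHUNK_SIZE)]

    def put(self, tokens: List[int]) -> None:
        for chunk in self._chunks(tokens):
            key = chunk_key(chunk)
            # A real engine would store KV tensors; a string stands in here.
            self._store.setdefault(key, f"kv-for-{key[:8]}")

    def lookup(self, tokens: List[int]) -> Tuple[int, int]:
        """Return (cached_chunks, total_full_chunks) for a prompt."""
        chunks = self._chunks(tokens)
        hits = sum(1 for c in chunks if chunk_key(c) in self._store)
        return hits, len(chunks)


index = ToyChunkIndex()
shared_doc = [11, 12, 13, 14, 15, 16, 17, 18]
index.put(shared_doc)

# The shared document follows a different 4-token preamble, so it is not
# a prefix of the new prompt, but its chunks still match the index.
new_prompt = [1, 2, 3, 4] + shared_doc
hits, total = index.lookup(new_prompt)
print(f"{hits} of {total} chunks found in cache")
```

The match in this sketch depends on the preamble length being a multiple of the chunk size, so the shared document stays aligned to chunk boundaries. A production system has to handle misaligned spans as well.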

For workloads with repeated context, LMCache achieves faster Time to First Token (TTFT) when processing multi-million token contexts. Organizations deploying LMI can configure CPU offloading when instance RAM permits for optimal performance, or use NVMe with O_DIRECT enabled for workloads requiring larger cache capacity. Implementing session-based sticky routing on Amazon SageMaker AI helps maximize cache hit rates by making sure that requests from the same session consistently route to instances with relevant cached content.

LMCache performance benchmarks

Testing across various model sizes and context lengths shows performance improvements for long-context inference workloads. The testing methodology adapted the LMCache Long Doc QA benchmark to work with the LMI container and consists of three rounds: a pre-warmup round for cold-start initialization, a warmup round to populate LMCache storage, and a query round to measure performance when retrieving from cache. Benchmarks were conducted on p4de.24xlarge instances (8× A100 GPUs, 1.1 TB RAM, NVMe SSD) using Qwen models with 46 documents of 10,000 tokens each (460,000 total tokens) and 4 concurrent requests.

In this benchmark, CPU offloading delivers a 2.18x speedup in total request latency compared to baseline (52.978s → 24.274s) and 2.65x faster TTFT (1.161s → 0.438s). NVMe storage with O_DIRECT enabled approaches CPU performance (0.741s TTFT) while supporting TB-scale caching capacity, achieving a 1.84x speedup in total request latency and 1.57x faster TTFT. For CPU offloading, these results correspond to a 62% TTFT reduction and a 54% request latency reduction, closely aligning with published LMCache benchmarks. The variation in improvement percentages can likely be attributed to hardware and minor configuration differences. These latency reductions can translate to cost savings: if throughput scales with request latency, the 54% reduction in request processing time allows the same infrastructure to handle roughly twice the request volume, approximately halving per-request compute costs.
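The speedup and reduction figures follow directly from the reported latencies, and the arithmetic can be reproduced with a short Python sketch. The helper names `speedup` and `reduction_pct` are illustrative. Only measurements quoted in this post are used, so the NVMe total request latency, which is not stated, is left out.

```python
def speedup(baseline_s: float, optimized_s: float) -> float:
    """How many times faster the optimized run is than the baseline."""
    return baseline_s / optimized_s


def reduction_pct(baseline_s: float, optimized_s: float) -> float:
    """Percentage of baseline time removed by the optimization."""
    return (1.0 - optimized_s / baseline_s) * 100.0


# Measurements quoted in the benchmark discussion (seconds).
BASELINE_LATENCY_S = 52.978
CPU_LATENCY_S = 24.274
BASELINE_TTFT_S = 1.161
CPU_TTFT_S = 0.438
NVME_TTFT_S = 0.741

cpu_latency_speedup = speedup(BASELINE_LATENCY_S, CPU_LATENCY_S)
cpu_ttft_speedup = speedup(BASELINE_TTFT_S, CPU_TTFT_S)
nvme_ttft_speedup = speedup(BASELINE_TTFT_S, NVME_TTFT_S)
cpu_ttft_reduction = reduction_pct(BASELINE_TTFT_S, CPU_TTFT_S)
cpu_latency_reduction = reduction_pct(BASELINE_LATENCY_S, CPU_LATENCY_S)

print(f"CPU offload latency speedup: {cpu_latency_speedup:.2f}x")
print(f"CPU offload TTFT speedup:    {cpu_ttft_speedup:.2f}x")
print(f"NVMe TTFT speedup:           {nvme_ttft_speedup:.2f}x")
print(f"CPU offload TTFT reduction:    {cpu_ttft_reduction:.0f}%")
print(f"CPU offload latency reduction: {cpu_latency_reduction:.0f}%")
```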

Performance characteristics vary significantly by model size because KV cache memory requirements per token differ. Larger models require substantially more memory per token (Qwen2.5-1.5B: 28 KB/token, Qwen2.5-7B: 56 KB/token, Qwen2.5-72B: 320 KB/token), so they exhaust GPU KV cache capacity at much shorter context lengths. Qwen2.5-1.5B can store KV cache for up to 2.6M tokens in GPU memory, while Qwen2.5-72B reaches its limit at 480K tokens. This means LMCache delivers value at shorter contexts for larger models. A 72B model can benefit from CPU offloading starting around 500K tokens with 4-6x speedups, whereas smaller models only require offloading at extreme context lengths beyond 2.5M tokens.
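The per-token figures above can be reproduced from each model's attention configuration. The sketch below assumes the standard formula for grouped-query attention (one key and one value vector per KV head per layer) and 16-bit KV storage. The layer counts, KV head counts, and head dimension are assumptions taken from the publicly released Qwen2.5 model configurations, not from this post.

```python
from typing import Dict, Tuple


def kv_bytes_per_token(
    num_layers: int, num_kv_heads: int, head_dim: int, dtype_bytes: int = 2
) -> int:
    """KV cache size for one token, in bytes.

    The leading factor of 2 accounts for storing both a key and a value
    vector for every KV head in every layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * dtype_bytes


# (num_layers, num_kv_heads, head_dim); assumed from public model configs.
MODELS: Dict[str, Tuple[int, int, int]] = {
    "Qwen2.5-1.5B": (28, 2, 128),
    "Qwen2.5-7B": (28, 4, 128),
    "Qwen2.5-72B": (80, 8, 128),
}

kb_per_token = {
    name: kv_bytes_per_token(*cfg) // 1024 for name, cfg in MODELS.items()
}

for name, kb in kb_per_token.items():
    print(f"{name}: {kb} KB/token")
```

Under these assumptions, the growth from 28 KB to 320 KB per token comes from both more layers and more KV heads, which is why the number of KV heads matters when choosing offloading thresholds.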

How to use LMCache

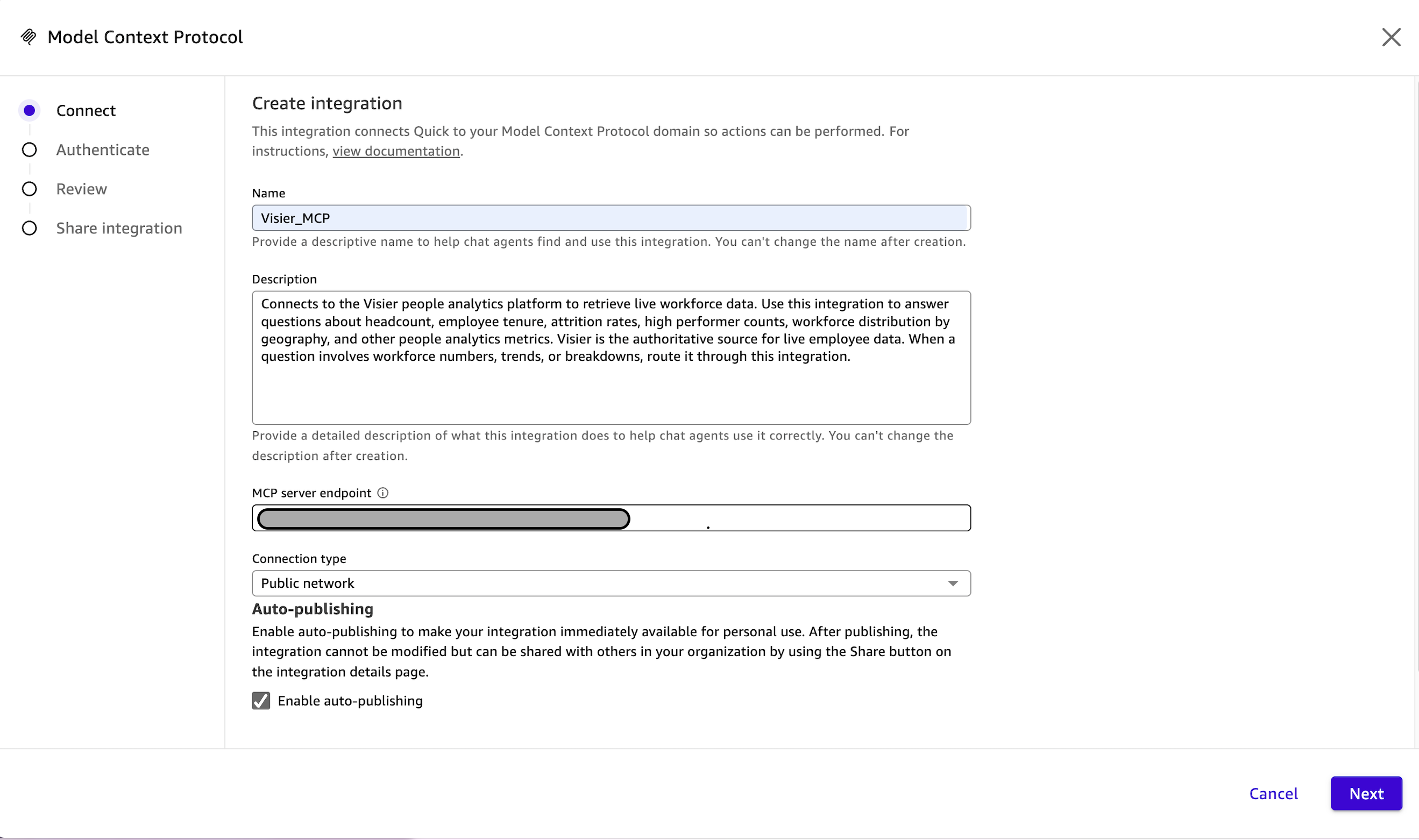

There are two main methods for configuring LMCache as defined in the GitHub documentation. The first is a manual configuration approach, and the second is an automated configuration made available in new versions of LMI.

Manual configuration

For manual configuration, customers create their own LMCache configuration file and reference it in a properties file or an environment variable:

    option.lmcache_config_file=/path/to/your/lmcache_config.yaml
    # OR
    OPTION_LMCACHE_CONFIG_FILE=/path/to/your/lmcache_config.yaml

This approach gives customers control over LMCache settings, so that they can customize cache storage backends, chunk sizes, and other advanced parameters according to their specific requirements.
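As a rough illustration of what such a file might contain, the Python sketch below renders a small YAML document with CPU and disk tiers. The key names (`chunk_size`, `local_cpu`, `max_local_cpu_size`, `local_disk`, `max_local_disk_size`) are assumptions based on LMCache's documented options and should be checked against the LMCache version bundled with your container. The helper `render_lmcache_config` and all values are hypothetical placeholders.

```python
import tempfile
from pathlib import Path
from typing import Optional


def render_lmcache_config(
    chunk_size: int,
    max_cpu_gb: float,
    disk_dir: Optional[str] = None,
    max_disk_gb: float = 0.0,
) -> str:
    """Render a small YAML document; key names are assumptions to verify."""
    lines = [
        f"chunk_size: {chunk_size}",
        "local_cpu: true",
        f"max_local_cpu_size: {max_cpu_gb}",
    ]
    if disk_dir is not None:
        # Disk tier is optional; omit it for CPU-only offloading.
        lines.append(f'local_disk: "file://{disk_dir}"')
        lines.append(f"max_local_disk_size: {max_disk_gb}")
    return "\n".join(lines) + "\n"


# Illustrative values only; size the tiers for your own instance type.
config_text = render_lmcache_config(
    chunk_size=256, max_cpu_gb=20.0, disk_dir="/tmp/lmcache", max_disk_gb=100.0
)

# Written to a temporary directory here; a deployment would place the
# file wherever option.lmcache_config_file points.
config_path = Path(tempfile.mkdtemp()) / "lmcache_config.yaml"
config_path.write_text(config_text)
print(config_text)
```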

Automatic configuration

For streamlined deployments, customers can enable automatic LMCache configuration similarly:

    option.lmcache_auto_config=True
    # OR
    OPTION_LMCACHE_AUTO_CONFIG=True

Auto-configuration generates an LMCache configuration based on the CPU memory and disk space available on the host machine. This deployment option only supports tensor parallelism deployments, assumes /tmp is mounted on NVMe storage for disk-based caching, and requires maxWorkers=1. These constraints exist because auto-configuration is designed for serving a single model per container instance. For serving multiple models or model copies, customers should use Amazon SageMaker AI inference components, which facilitate resource isolation between models and model copies.

The automatic configuration feature streamlines KV cache deployment by removing the need for manual YAML configuration files, so that customers can quickly get started with LMCache optimization.

Deployment recommendations

Based on comprehensive benchmarking results and deployment experience, several recommendations emerge for optimal LMI deployment:

- Configure CPU offloading when instance RAM permits, helping deliver optimal performance for most workloads

- Use NVMe with O_DIRECT enabled for workloads requiring larger cache capacity beyond available RAM

- Implement session-based sticky routing on SageMaker AI to help maximize cache hit rates and facilitate consistent performance

- Consider model architecture when configuring offloading thresholds, as models with different KV head configurations will have different optimal settings

- Use automatic LMCache configuration to streamline deployment and reduce operational complexity

Enhanced performance with EAGLE speculative decoding

The newest releases of LMI help deliver performance improvements through support for EAGLE speculative decoding. Extrapolation Algorithm for Greater Language-model Efficiency (EAGLE) speeds up large language model decoding by predicting future tokens directly from the hidden layers of the model. This approach generates draft tokens that the primary model validates in parallel, helping reduce overall generation latency while maintaining output quality.
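The draft-then-verify loop can be sketched in a few lines of Python. This is a toy version of generic speculative decoding with greedy verification, not EAGLE itself: it does not model drafting from hidden states, the "models" are simple hypothetical functions over integer tokens, and verification runs sequentially where a real engine would use one batched forward pass.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]


def speculative_step(
    target: NextToken, draft: NextToken, context: List[int], k: int
) -> List[int]:
    """Run one draft-then-verify step and return the accepted tokens."""
    # 1. The draft model proposes k tokens autoregressively.
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(context + proposed))

    # 2. The target model checks each proposed position in order.
    accepted: List[int] = []
    for tok in proposed:
        expected = target(context + accepted)
        if tok != expected:
            # First mismatch: keep the target's token and discard the rest.
            accepted.append(expected)
            return accepted
        accepted.append(tok)

    # Every draft token matched, so the target contributes one extra token.
    accepted.append(target(context + accepted))
    return accepted


def generate(
    target: NextToken, draft: NextToken, prompt: List[int], max_new: int, k: int = 3
) -> List[int]:
    out = list(prompt)
    while len(out) - len(prompt) < max_new:
        out.extend(speculative_step(target, draft, out, k))
    return out[: len(prompt) + max_new]


def greedy(target: NextToken, prompt: List[int], max_new: int) -> List[int]:
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out


def target_model(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10  # toy rule: count upward modulo 10


def draft_model(ctx: List[int]) -> int:
    nxt = (ctx[-1] + 1) % 10
    return 0 if nxt == 5 else nxt  # deliberately wrong when the answer is 5


spec_out = generate(target_model, draft_model, [0], max_new=12)
plain_out = greedy(target_model, [0], max_new=12)
print(spec_out == plain_out)
```

The property the sketch illustrates is that greedy verification reproduces the target model's own output even when the draft model is sometimes wrong. Each step emits between one and `k + 1` tokens, depending on how many drafts the target accepts.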

Configuring EAGLE speculative decoding is straightforward, requiring only specification of the draft model path and number of speculative tokens in your deployment configuration. This enables organizations to achieve better performance for LLM hosting workloads with benefits for high-concurrency production deployments and reasoning-focused models.

Expanded model support and multimodal capabilities

The newest releases of LMI help deliver comprehensive support for cutting-edge open source models, including DeepSeek v3.2, Mistral Large 3, Ministral 3, and the Qwen3-VL series. Performance optimizations help improve both throughput and Time to First Token (TTFT) for large-scale model serving across these architectures. Expanded multimodal capabilities include FlashAttention ViT support, now serving as the default backend for vision-language models. EAGLE speculative decoding improvements bring multi-step CUDA graph support and multimodal support with Qwen3-VL, enabling faster inference for vision-language workloads. With these enhancements, organizations can deploy and scale foundation models (FMs) faster and more efficiently, which helps to reduce time-to-production while lowering operational complexity.

LoRA adapter hosting improvements

The newest releases of LMI bring notable enhancements to hosting multiple LoRA adapters on SageMaker AI. LoRA adapters are now lazy loaded: when you create an inference component, the adapter's component becomes available almost immediately, but loading the adapter weights and registering them with the inference engine happens on the first invocation. This approach helps reduce deployment time while maintaining flexibility for multi-tenant scenarios.
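The lazy-loading pattern itself is simple to sketch. The Python example below is a generic illustration, not the LMI implementation: `LazyAdapterRegistry`, the fake loader, and the adapter path are hypothetical names chosen for the example.

```python
from typing import Callable, Dict, List


class LazyAdapterRegistry:
    """Hypothetical registry that defers weight loading to first use."""

    def __init__(self, loader: Callable[[str], dict]) -> None:
        self._loader = loader
        self._paths: Dict[str, str] = {}
        self._loaded: Dict[str, dict] = {}

    def register(self, name: str, path: str) -> None:
        """Cheap: records where the adapter lives without reading weights."""
        self._paths[name] = path

    def is_loaded(self, name: str) -> bool:
        return name in self._loaded

    def get(self, name: str) -> dict:
        """Loads weights on the first call, then serves the cached copy."""
        if name not in self._loaded:
            self._loaded[name] = self._loader(self._paths[name])
        return self._loaded[name]


load_calls: List[str] = []


def fake_loader(path: str) -> dict:
    # Stand-in for reading adapter weights from storage.
    load_calls.append(path)
    return {"path": path, "weights": "placeholder"}


registry = LazyAdapterRegistry(fake_loader)
registry.register("support-adapter", "/adapters/support")  # returns immediately
loaded_after_register = registry.is_loaded("support-adapter")

registry.get("support-adapter")  # first invocation triggers the load
registry.get("support-adapter")  # later invocations reuse the loaded weights
print(loaded_after_register, len(load_calls))
```

The trade-off is that the first request to each adapter pays the loading cost, in exchange for faster component creation.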

Custom input and output preprocessing scripts are now supported for both base models and adapters, with each inference component hosting LoRA adapters able to have different scripts. This enables adapter-specific formatting logic without modifying core inference code, supporting multi-tenant deployments where different adapters apply distinct formatting rules to the same underlying model.

Custom output formatters provide a flexible mechanism for transforming model responses before they are returned to clients so that organizations can standardize output formats, add custom metadata, or implement adapter-specific formatting logic. These formatters can be defined at the base model level to apply to the responses by default, or at the adapter level to override base model behavior for LoRA adapters. Common use cases include adding processing timestamps and custom metadata, transforming generated text with prefixes or formatting, calculating and injecting custom metrics, implementing adapter-specific output schemas for different client applications, and standardizing response formats across heterogeneous model deployments.
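The base-level default with adapter-level override can be pictured as a simple lookup. The Python sketch below is an illustration of that resolution order only. It does not use the LMI formatter API, and the function names, adapter name, and response fields are hypothetical.

```python
import time
from typing import Callable, Dict, Optional

Formatter = Callable[[dict], dict]


def base_formatter(response: dict) -> dict:
    """Default formatting applied to every response: add a timestamp."""
    out = dict(response)
    out["processed_at"] = time.time()
    return out


def support_adapter_formatter(response: dict) -> dict:
    """Adapter-specific formatting layered on top of the base behavior."""
    out = base_formatter(response)
    out["generated_text"] = "[support] " + out["generated_text"]
    return out


# Hypothetical mapping from adapter name to its override formatter.
ADAPTER_FORMATTERS: Dict[str, Formatter] = {
    "support-adapter": support_adapter_formatter,
}


def format_response(response: dict, adapter: Optional[str] = None) -> dict:
    """Use the adapter's formatter when one exists, else the base formatter."""
    formatter = base_formatter
    if adapter is not None:
        formatter = ADAPTER_FORMATTERS.get(adapter, base_formatter)
    return formatter(response)


raw = {"generated_text": "Your order has shipped."}
base_result = format_response(raw)
adapter_result = format_response(raw, adapter="support-adapter")
print(base_result["generated_text"])
print(adapter_result["generated_text"])
```

Copying the response before modifying it keeps the raw model output intact, which is useful when several formatters may be applied to the same underlying response.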

Get started today

The newest releases of LMI represent significant steps forward in large model inference capabilities. Organizations can deploy cutting-edge LLMs with greater performance and flexibility with the following:

- comprehensive LMCache support across the releases

- EAGLE speculative decoding for accelerated inference

- expanded model support including cutting-edge multimodal capabilities

- enhanced LoRA adapter hosting

The container’s configurable options provide the flexibility to fine-tune deployments for specific needs, whether optimizing for latency, throughput, or cost. With the comprehensive system capabilities of Amazon SageMaker AI, you can focus on delivering AI-powered solutions that help drive business value rather than managing infrastructure.

Explore these capabilities today when deploying your generative AI models on AWS and leverage the performance improvements and streamlined deployment experience to help accelerate your production workloads.
