Visit Opera browser website for full experience

Remarks

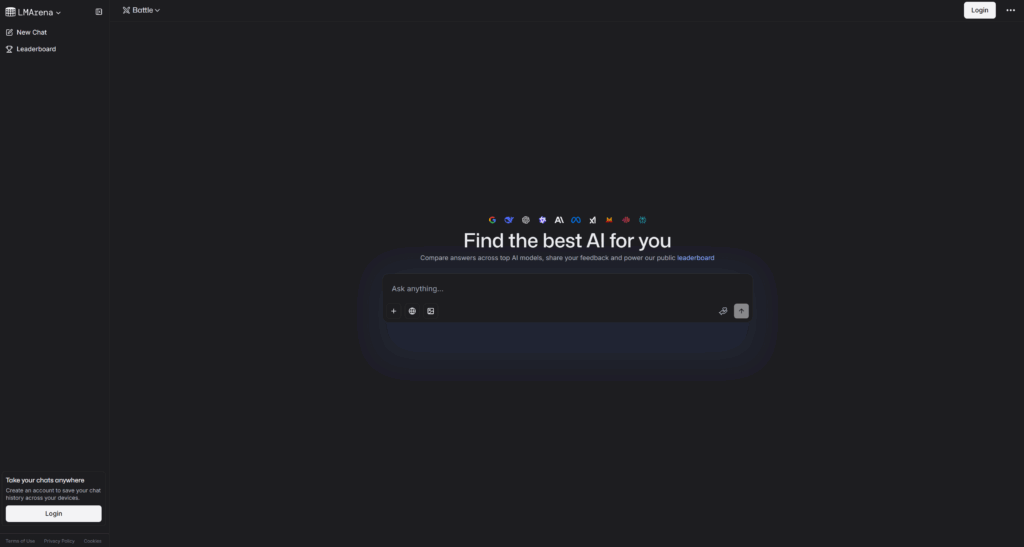

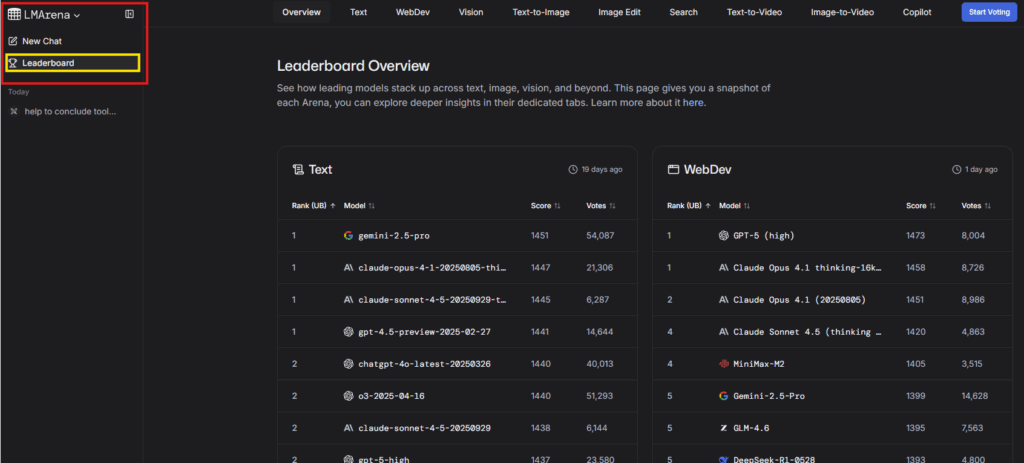

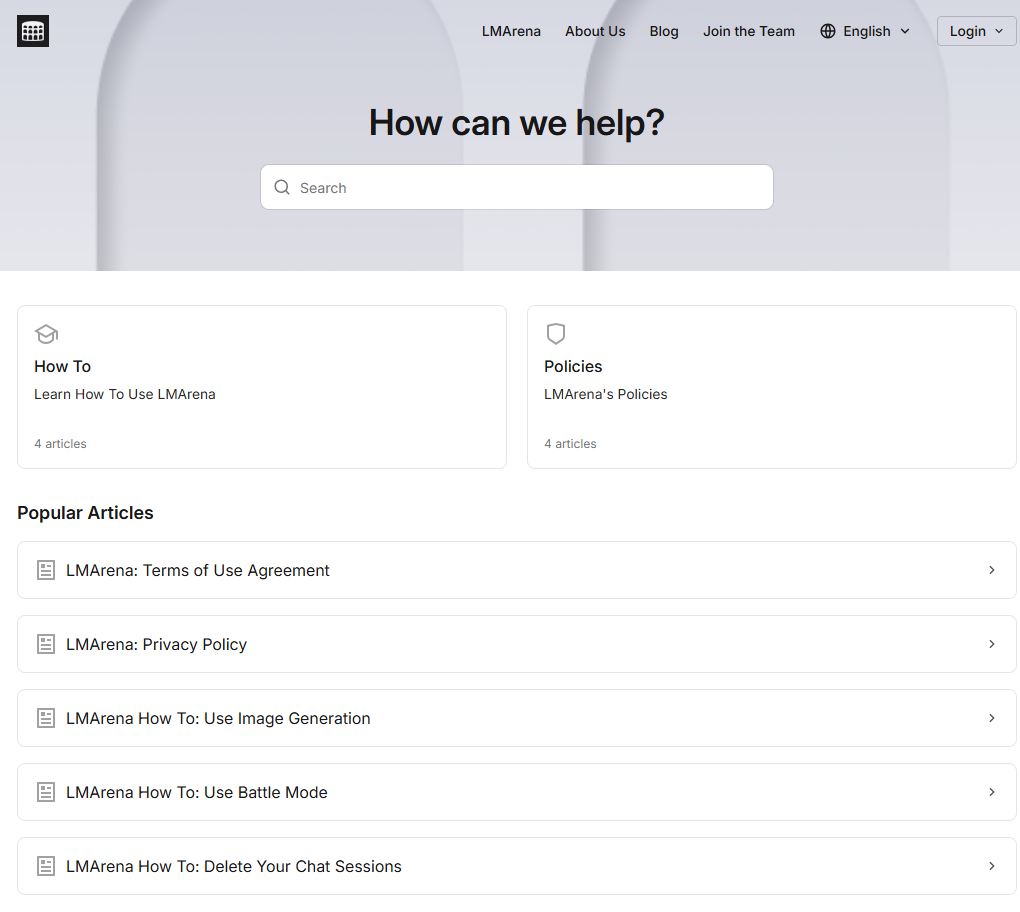

LMArena is a public, web-based platform that evaluates Large Language Models (LLMs) and other AI models through anonymous, crowd-sourced pairwise comparisons. Created by researchers from UC Berkeley and the LMSYS Org, it serves as a transparent and community-driven battleground for the world’s leading AI models.

LMArena’s core function is to provide a real-world, human-preference-based ranking of AI models, which complements traditional, static technical benchmarks.

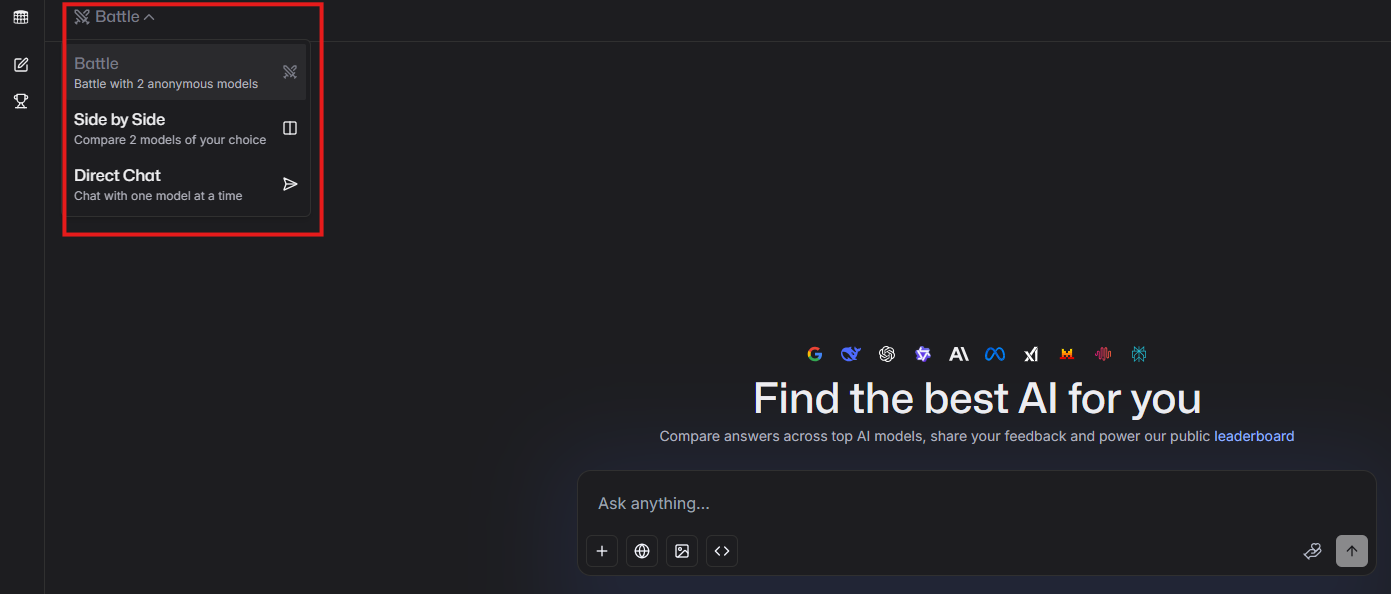

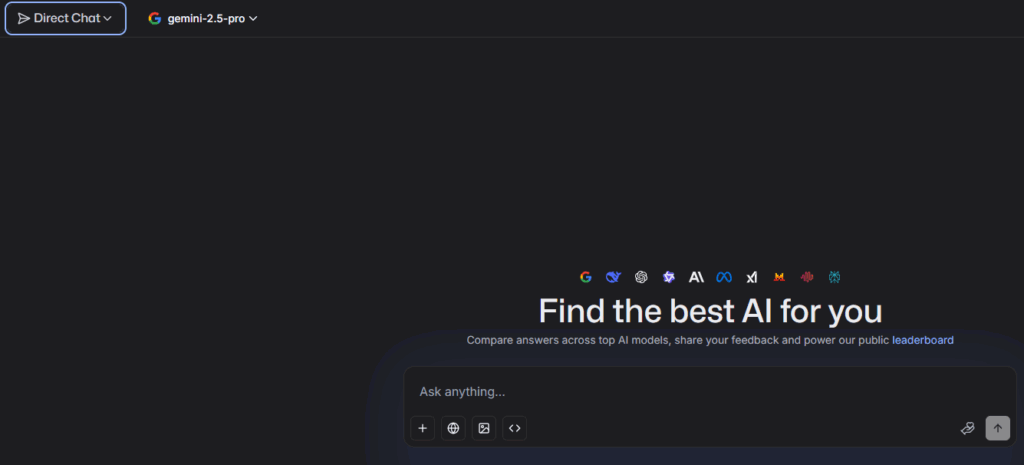

- Primary Use (Crowd-sourced AI Evaluation): Users submit a prompt, and two anonymous AI models respond. The user then votes for the “better” response, and each vote feeds a dynamic ranking system.

- Ranking System (Elo Rating): As in competitive chess, models gain or lose rating points based on wins and losses in these duels, and the resulting ratings form the public Leaderboard.

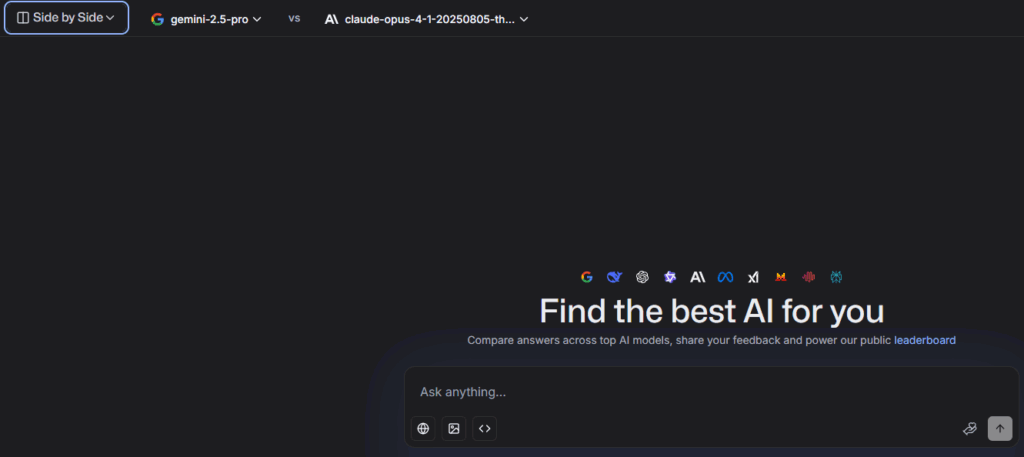

- Model Comparison: It allows for side-by-side comparison of numerous models, including closed-source giants (like GPT, Gemini, Claude) and popular open-source models (like LLaMA and Mistral).

- Arenas: Evaluation spans various modalities, including Text, Image Generation, Vision, and Text-to-Video.
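The ranking mechanism described in the list above can be illustrated with a minimal sketch of the textbook Elo update. This is an illustration under stated assumptions, not LMArena's actual scoring pipeline: the starting rating of 1000, the K-factor of 32, the model names, and the helper names `expected_score`, `update_elo`, and `run_battles` are all hypothetical choices made for the example.

```python
from typing import Dict, Iterable, Tuple


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float,
               k: float = 32.0) -> Tuple[float, float]:
    """Return new ratings after one battle.

    score_a is 1.0 if A won, 0.0 if B won, 0.5 for a tie.
    k (assumed value) controls how far a single vote moves the ratings.
    """
    exp_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - exp_a)
    # Whatever A gains, B loses, so the rating total is conserved.
    return rating_a + delta, rating_b - delta


def run_battles(battles: Iterable[Tuple[str, str, str]],
                initial: float = 1000.0,
                k: float = 32.0) -> Dict[str, float]:
    """Replay (model_a, model_b, winner) votes, winner in {'a', 'b', 'tie'}."""
    outcome = {"a": 1.0, "b": 0.0, "tie": 0.5}
    ratings: Dict[str, float] = {}
    for model_a, model_b, winner in battles:
        ra = ratings.get(model_a, initial)
        rb = ratings.get(model_b, initial)
        ratings[model_a], ratings[model_b] = update_elo(
            ra, rb, outcome[winner], k
        )
    return ratings


# Hypothetical votes between placeholder models.
votes = [
    ("model-x", "model-y", "a"),
    ("model-y", "model-z", "tie"),
    ("model-x", "model-z", "b"),
]
ratings = run_battles(votes)
# Sort by rating, highest first, to get a leaderboard.
leaderboard = sorted(ratings.items(), key=lambda item: item[1], reverse=True)
for name, rating in leaderboard:
    print(f"{name}: {rating:.1f}")
```

Because each update depends only on the two current ratings and the vote, a win against a higher-rated model moves the ratings more than a win against a lower-rated one, which is why the leaderboard reflects relative rather than absolute performance.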

Limitations:

While highly valuable for its real-world data, LMArena faces several limitations and areas of debate:

- Subjectivity of Votes: The rankings reflect human preference, which can be subjective, fickle, and vary by culture or prompt type, rather than absolute, objective capability.

- Sampling Bias: Models featured more frequently in battles naturally accrue more data updates and visibility, potentially skewing the leaderboard.

- Strategic Optimization: Some research suggests that labs might optimize model versions specifically to “ace” the types of prompts often seen on LMArena (known as “bench-maxing”), which can inflate a model’s score relative to its general-purpose performance.

- Model Availability: Certain models may require a sign-in or may not always be available for testing due to rate limits or other constraints.

- Context Scarcity: The Elo score can feel abstract, as it indicates relative performance based on user votes without providing the full context of why one model was preferred over another for specific tasks.

Visit the DeepSeek website for the full experience

Remarks

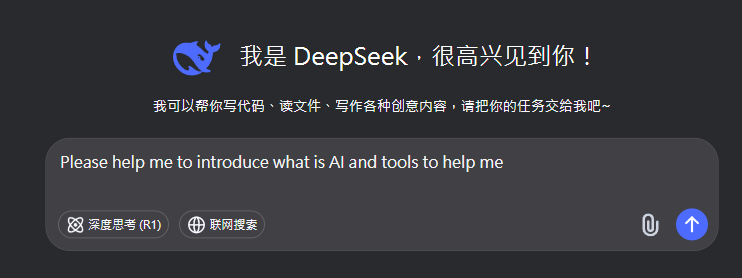

DeepSeek is an AI-powered tool designed for deep information retrieval, analysis, and content generation. It is commonly used in areas such as:

- Advanced Information Retrieval

  - DeepSeek can process and analyze large datasets to extract relevant insights.

  - It helps users find precise information beyond standard search engines.

- Natural Language Processing (NLP) Applications

  - Used for text summarization, sentiment analysis, and question-answering systems.

  - Supports various languages and can generate human-like responses.

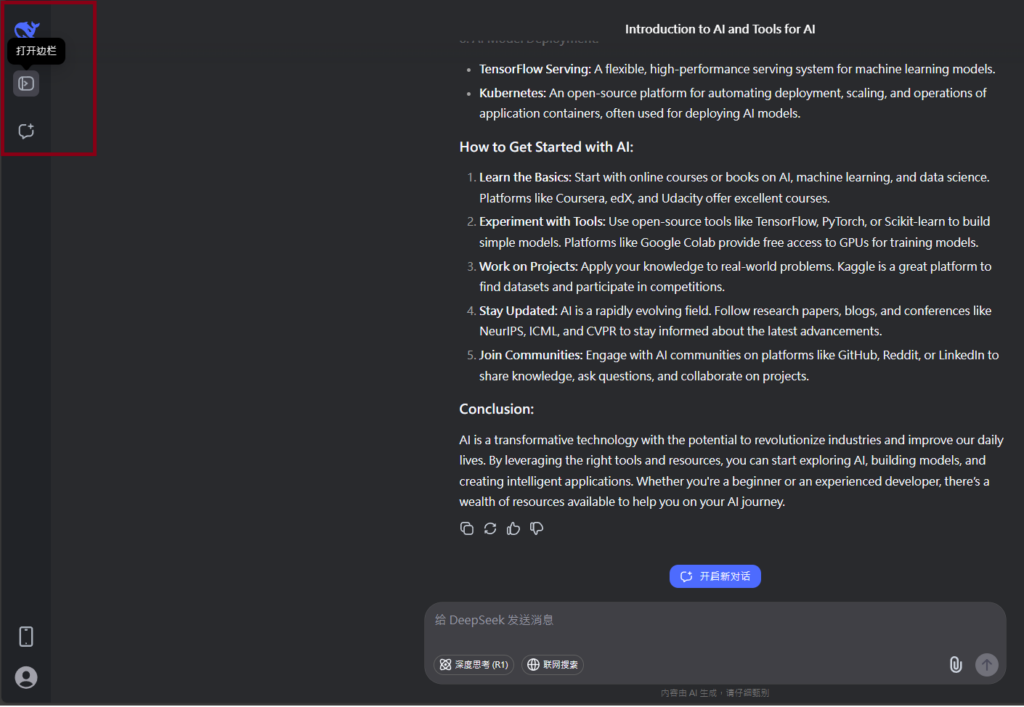

- AI-Assisted Research and Writing

  - Helps researchers analyze academic papers, generate summaries, and suggest references.

  - Useful for drafting articles, reports, and creative writing.

- Code Assistance and Debugging

  - Provides AI-powered code suggestions, optimizations, and bug fixes.

  - Supports multiple programming languages, aiding developers in software development.

- Business and Decision-Making Support

  - Analyzes market trends, customer feedback, and financial data for businesses.

  - Assists in generating insights for strategic decision-making.

Limitations:

- Accuracy and Hallucination Issues

  - AI models can sometimes generate incorrect or misleading information.

  - Outputs require human verification before they are relied upon.

- Limited Real-Time Data Access

  - May not always provide the latest information if it’s not connected to live data sources.

  - Some AI models work with pre-trained datasets, limiting real-time updates.

- Context Limitations

  - Struggles with highly nuanced or ambiguous queries.

  - Long conversations may lead to context loss or inconsistencies.

- Ethical and Bias Concerns

  - AI models can reflect biases present in training data.

  - Requires careful consideration when used in sensitive applications.

- Computational Resource Constraints

  - Running deep learning models requires significant computational power.

  - Latency issues may arise during complex queries or large-scale data analysis.
