AWS offers powerful generative AI services, including Amazon Bedrock, which allows organizations to create tailored use cases such as AI chat-based assistants that give answers based on knowledge contained in the customers’ documents, and much more. Many businesses want to integrate these cutting-edge AI capabilities with their existing collaboration tools, such as Google Chat, to enhance productivity and decision-making processes.

This post shows how you can implement an AI-powered business assistant, such as a custom Google Chat app, using the power of Amazon Bedrock. The solution integrates large language models (LLMs) with your organization’s data and provides an intelligent chat assistant that understands conversation context and provides relevant, interactive responses directly within the Google Chat interface.

This solution showcases how to bridge the gap between Google Workspace and AWS services, offering a practical approach to enhancing employee efficiency through conversational AI. By implementing this architectural pattern, organizations that use Google Workspace can empower their workforce to access groundbreaking AI solutions powered by Amazon Web Services (AWS) and make informed decisions without leaving their collaboration tool.

With this solution, you can interact directly with the chat assistant powered by AWS from your Google Chat environment, as shown in the following example.

Solution overview

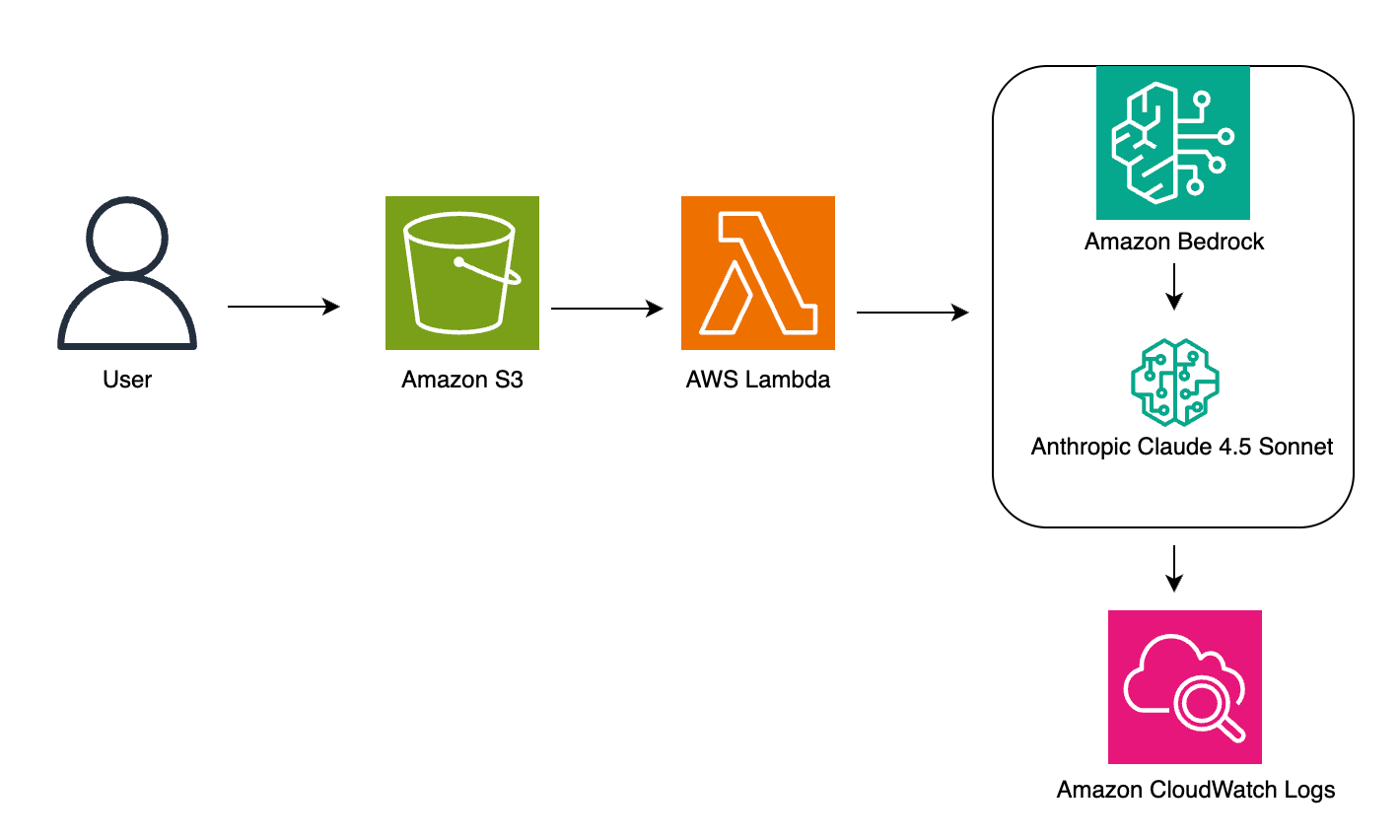

We use the following key services to build this intelligent chat assistant:

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies such as AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI.

AWS Lambda, a serverless computing service, lets you run the application logic, process requests, and interact with Amazon Bedrock.

Amazon DynamoDB lets you store session memory data to maintain context across conversations.

Amazon API Gateway lets you create a secure API endpoint for the custom Google Chat app to communicate with our AWS-based solution.

The following figure illustrates the high-level design of the solution.

The workflow includes the following steps:

The process begins when a user sends a message through Google Chat, either in a direct message or in a chat space where the application is installed.

The custom Google Chat app, configured for HTTP integration, sends an HTTP request to an API Gateway endpoint. This request contains the user’s message and relevant metadata.

Before processing the request, a Lambda authorizer function associated with the API Gateway authenticates the incoming message. This verifies that only legitimate requests from the custom Google Chat app are processed.

After it’s authenticated, the request is forwarded to another Lambda function that contains our core application logic. This function is responsible for interpreting the user’s request and formulating an appropriate response.

The Lambda function interacts with Amazon Bedrock through its runtime APIs, using either the RetrieveAndGenerate API, which connects to a knowledge base, or the Converse API, which chats directly with an LLM available on Amazon Bedrock. With the RetrieveAndGenerate API, the Lambda function can search the organization’s knowledge base and generate a context-aware response. The Lambda function also uses a DynamoDB table to keep track of the conversation history, either with an individual user or within a Google Chat space.

After receiving the generated response from Amazon Bedrock, the Lambda function sends this answer back through API Gateway to the Google Chat app.

Finally, the AI-generated response appears in the user’s Google Chat interface, providing the answer to their question.
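The choice between the two Amazon Bedrock APIs in the workflow above can be sketched as follows. This is a minimal illustration, not the code from the repository: the function name generate_answer and its parameters are assumptions, and the clients are passed in so that the logic can be exercised without AWS credentials.

```python
from typing import Any, Optional


def generate_answer(
    user_text: str,
    model_id: str,
    runtime_client: Any,
    agent_runtime_client: Any = None,
    knowledge_base_id: Optional[str] = None,
    region: str = "us-east-1",  # assumed Region, adjust to your deployment
) -> str:
    """Answer from the knowledge base if one is configured, else from the model directly."""
    if knowledge_base_id and agent_runtime_client is not None:
        # RetrieveAndGenerate: retrieval from the knowledge base and
        # answer generation happen in a single call.
        response = agent_runtime_client.retrieve_and_generate(
            input={"text": user_text},
            retrieveAndGenerateConfiguration={
                "type": "KNOWLEDGE_BASE",
                "knowledgeBaseConfiguration": {
                    "knowledgeBaseId": knowledge_base_id,
                    "modelArn": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
                },
            },
        )
        return response["output"]["text"]

    # Converse: chat directly with the model, without retrieval.
    response = runtime_client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": user_text}]}],
    )
    return response["output"]["message"]["content"][0]["text"]
```

In a Lambda function, the two clients would typically be created once at module load with boto3.client("bedrock-runtime") and boto3.client("bedrock-agent-runtime").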

This architecture allows for a seamless integration between Google Workspace and AWS services, creating an AI-driven assistant that enhances information accessibility within the familiar Google Chat environment. You can customize this architecture to connect other solutions that you develop in AWS to Google Chat.

In the following sections, we explain how to deploy this architecture.

Prerequisites

To implement the solution outlined in this post, you must have the following:

A Linux or macOS development environment with at least 20 GB of free disk space. It can be a local machine or a cloud instance. If you use an AWS Cloud9 instance, make sure you have increased the disk size to 20 GB.

The AWS Command Line Interface (AWS CLI) installed on your development environment. This tool allows you to interact with AWS services through command line commands.

An AWS account and an AWS Identity and Access Management (IAM) principal with sufficient permissions to create and manage the resources needed for this application. If you don’t have an AWS account, refer to How do I create and activate a new Amazon Web Services account? To configure the AWS CLI with the associated credentials, typically, you set up an AWS access key ID and secret access key for a designated IAM user with appropriate permissions.

Access to Amazon Bedrock FMs. In this post, we use either Anthropic’s Claude 3 Sonnet or Amazon Titan Text G1 - Premier, both available in Amazon Bedrock, but you can also choose other models that are supported for Amazon Bedrock knowledge bases.

Optionally, an Amazon Bedrock knowledge base created in your account, which allows you to integrate your own documents into your generative AI applications. If you don’t have an existing knowledge base, refer to Create an Amazon Bedrock knowledge base. Alternatively, the solution proposes an option without a knowledge base, with answers generated only by the FM on the backend.

A Business or Enterprise Google Workspace account with access to Google Chat. You also need a Google Cloud project with billing enabled. To check that an existing project has billing enabled, see Verify the billing status of your projects.

Docker installed on your development environment.

Deploy the solution

The application presented in this post is available in the accompanying GitHub repository and provided as an AWS Cloud Development Kit (AWS CDK) project. Complete the following steps to deploy the AWS CDK project in your AWS account:

Clone the GitHub repository on your local machine.

Create a Python virtual environment for the project. This project is set up like a standard Python project, and we recommend that you create a virtual environment within it, stored under the .venv directory. To manually create a virtual environment on macOS and Linux, run the command python3 -m venv .venv from the project root.

After the virtual environment is created, activate it by running the command source .venv/bin/activate.

Install the Python package dependencies that are needed to build and deploy the project. In the root directory, run the following command:

Run the cdk bootstrap command to prepare an AWS environment for deploying the AWS CDK application.

Run the script init-script.bash:

This script prompts you for the following:

The Amazon Bedrock knowledge base ID to associate with your Google Chat app (refer to the prerequisites section). Keep this blank if you decide not to use an existing knowledge base.

Which LLM you want to use in Amazon Bedrock for text generation. For this solution, you can choose between Anthropic’s Claude 3 Sonnet and Amazon Titan Text G1 - Premier.

The following screenshot shows the input variables to the init-script.bash script.

The script deploys the AWS CDK project in your account. After it runs successfully, it outputs the parameter ApiEndpoint, whose value designates the invoke URL for the HTTP API endpoint deployed as part of this project. Note the value of this parameter because you use it later in the Google Chat app configuration.

The following screenshot shows the output of the init-script.bash script.

You can also find this parameter on the AWS CloudFormation console, on the stack’s Outputs tab.
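If you prefer to read the value programmatically, the stack outputs are also available through the CloudFormation DescribeStacks API. The following is a minimal sketch: the helper name get_stack_output is hypothetical, and you need to supply the name of the stack that the script deployed in your account.

```python
from typing import Any, Optional


def get_stack_output(
    cfn_client: Any, stack_name: str, output_key: str = "ApiEndpoint"
) -> Optional[str]:
    """Return the value of one CloudFormation stack output, or None if it is absent."""
    stacks = cfn_client.describe_stacks(StackName=stack_name)["Stacks"]
    for output in stacks[0].get("Outputs", []):
        if output["OutputKey"] == output_key:
            return output["OutputValue"]
    return None
```

For example, get_stack_output(boto3.client("cloudformation"), "<your-stack-name>") returns the invoke URL when the stack exposes an output whose key is exactly ApiEndpoint.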

Register a new app in Google Chat

To integrate the AWS-powered chat assistant into Google Chat, you create a custom Google Chat app. Google Chat apps are extensions that bring external services and resources directly into the Google Chat environment. These apps can participate in direct messages, group conversations, or dedicated chat spaces, allowing users to access information and take actions without leaving their chat interface.

For our AI-powered business assistant, we create an interactive custom Google Chat app that uses the HTTP integration method. This approach allows our app to receive and respond to user messages in real time, providing a seamless conversational experience.

After you have deployed the AWS CDK stack in the previous section, complete the following steps to register a Google Chat app in the Google Cloud console:

Open the Google Cloud console and sign in with your Google account.

Search for “Google Chat API” and navigate to the Google Chat API page, which lets you build Google Chat apps to integrate your services with Google Chat.

If this is your first time using the Google Chat API, choose ACTIVATE. Otherwise, choose MANAGE.

On the Configuration tab, under Application info, provide the following information, as shown in the following screenshot:

For App name, enter an app name (for example, bedrock-chat).

For Avatar URL, enter the URL for your app’s avatar image. As a default, you can provide the Google Chat product icon.

For Description, enter a description of the app (for example, Chat App with Amazon Bedrock).

Under Interactive features, turn on Enable Interactive features.

Under Functionality, select Receive 1:1 messages and Join spaces and group conversations, as shown in the following screenshot.

Under Connection settings, provide the following information:

Select App URL.

For App URL, enter the invoke URL associated with the deployment stage of the API Gateway HTTP API. This is the ApiEndpoint parameter that you noted at the end of the deployment of the AWS CDK stack.

For Authentication Audience, select App URL, as shown in the following screenshot.

Under Visibility, select Make this Chat app available to specific people and groups in <your-company-name> and provide email addresses for individuals and groups who will be authorized to use your app. You need to add at least your own email if you want to access the app.

Choose Save.

The following animation illustrates these steps on the Google Cloud console.

After you complete these steps, the new Amazon Bedrock chat app should be accessible in Google Chat for the people or groups that you authorized in your Google Workspace.

To dispatch interaction events to the solution deployed in this post, Google Chat sends requests to your API Gateway endpoint. To let you verify the authenticity of these requests, Google Chat includes a bearer token in the Authorization header of every HTTPS request to your endpoint. The Lambda authorizer function provided with this solution uses the Google OAuth client library to verify that the bearer token was issued by Google Chat and targeted at your specific app. You can further customize the Lambda authorizer function to implement additional control rules based on the User or Space objects included in the request from Google Chat to your API Gateway endpoint. This lets you fine-tune access control, for example, by restricting certain features to specific users or limiting the app’s functionality in particular chat spaces.
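The token check can be sketched as follows. This is an illustration under stated assumptions rather than the authorizer shipped in the repository: it assumes an HTTP API Lambda authorizer using simple responses (payload format 2.0, so header names arrive in lowercase), an Authentication Audience set to the app URL, and the google-auth and requests packages bundled with the function. The names authorize and verify_with_google are hypothetical.

```python
from typing import Any, Callable, Dict

# Service account that Google Chat uses as the token's email claim
# when the Authentication Audience is the app URL.
CHAT_ISSUER = "chat@system.gserviceaccount.com"


def verify_with_google(token: str, audience: str) -> Dict[str, Any]:
    """Validate a Google-signed ID token and return its claims."""
    # Imported lazily so the module loads even where google-auth is absent.
    from google.auth.transport import requests
    from google.oauth2 import id_token

    return id_token.verify_oauth2_token(token, requests.Request(), audience)


def authorize(
    event: Dict[str, Any],
    audience: str,
    verify: Callable[[str, str], Dict[str, Any]] = verify_with_google,
) -> Dict[str, bool]:
    """Return a simple-response authorizer result for an HTTP API request."""
    header = (event.get("headers") or {}).get("authorization", "")
    scheme, _, token = header.partition(" ")
    if scheme.lower() != "bearer" or not token:
        return {"isAuthorized": False}
    try:
        claims = verify(token, audience)
    except Exception:
        # Bad signature, expired token, or wrong audience: deny by default.
        return {"isAuthorized": False}
    # Accept only tokens issued for the Google Chat service account.
    return {"isAuthorized": claims.get("email") == CHAT_ISSUER}
```

Passing the verifier as a parameter keeps the decision logic testable offline. Additional rules based on the User or Space objects could be added after the email check.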

Converse with your custom Google Chat app

You can now converse with the new app within your Google Chat interface. Connect to Google Chat with an email that you authorized during the configuration of your app and initiate a conversation by finding the app:

Choose New chat in the chat pane, then enter the name of the application (bedrock-chat) in the search field.

Choose Chat and enter a natural language phrase to interact with the application.

Although we previously demonstrated a usage scenario that involves a direct chat with the Amazon Bedrock application, you can also invoke the application from within a Google Chat space, as illustrated in the following demo.

Customize the solution

In this post, we used Amazon Bedrock to power the chat-based assistant. However, you can customize the solution to use a variety of AWS services and create a solution that fits your specific business needs.

To customize the application, complete the following steps:

Edit the file lambda/lambda-chat-app/lambda-chatapp-code.py in the GitHub repository you cloned to your local machine during deployment.

Implement your business logic in this file.

The code runs in a Lambda function. Each time a request is processed, Lambda runs the lambda_handler function:

When Google Chat sends a request, the lambda_handler function calls the handle_post function.
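As a rough sketch of that dispatch, assume an API Gateway HTTP API proxy event whose body is the JSON interaction event sent by Google Chat. The handle_post signature used here (user message and space name in, reply text out) is a hypothetical stand-in, and the code in the repository may differ.

```python
import json
from typing import Any, Dict


def handle_post(user_message: str, space_name: str) -> str:
    """Placeholder business logic with a hypothetical signature."""
    return f"You said: {user_message}"


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Parse the Google Chat event and return a text reply through API Gateway."""
    chat_event = json.loads(event.get("body") or "{}")
    if chat_event.get("type") != "MESSAGE":
        # Other event types (for example ADDED_TO_SPACE) get an empty acknowledgement.
        reply: Dict[str, Any] = {}
    else:
        message = chat_event.get("message", {})
        # argumentText omits the @mention of the app; fall back to the full text.
        user_message = (message.get("argumentText") or message.get("text", "")).strip()
        space_name = chat_event.get("space", {}).get("name", "")
        reply = {"text": handle_post(user_message, space_name)}
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(reply),
    }
```

Google Chat renders the text field of the JSON body as the app's reply in the conversation.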

Let’s replace the handle_post function with the following code:
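A minimal sketch of such a replacement follows. It assumes that handle_post receives the user's message text and the Google Chat space name and returns the reply text. The exact signature in the repository may differ, so adapt the parameters to the function you find in the file.

```python
def handle_post(user_message: str, space_name: str) -> str:
    """Echo the inputs back so you can confirm that the redeployed code is running."""
    return (
        f"Hello! You said: {user_message}\n"
        f"The space name is: {space_name}"
    )
```

Echoing both values is a quick way to check what the function receives before you plug in real business logic.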

Save your file, then run the following command in your terminal to deploy your new code:

The deployment should take about a minute. When it’s complete, you can go to Google Chat and test your new business logic. The following screenshot shows an example chat.

As the image shows, your function gets the user message and a space name. You can use this space name as a unique ID for the conversation, which lets you manage the conversation history.
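One way to use the space name as a conversation key is sketched below. The table layout (a space_name partition key and a messages attribute), the helper names, and the MAX_TURNS cap are all assumptions made for illustration; the table deployed by the solution may use a different schema.

```python
from typing import Any, Dict, List

MAX_TURNS = 10  # hypothetical cap on stored user/assistant exchanges


def load_history(table: Any, space_name: str) -> List[Dict[str, Any]]:
    """Return the stored messages for a space, or an empty list."""
    item = table.get_item(Key={"space_name": space_name}).get("Item")
    return item["messages"] if item else []


def append_turn(
    table: Any, space_name: str, user_text: str, assistant_text: str
) -> List[Dict[str, Any]]:
    """Append one exchange, trim old turns, and persist the result."""
    messages = load_history(table, space_name)
    # Messages use the Converse API shape so they can be replayed as context.
    messages.append({"role": "user", "content": [{"text": user_text}]})
    messages.append({"role": "assistant", "content": [{"text": assistant_text}]})
    # Keep whole exchanges only, so the history always starts with a user turn.
    messages = messages[-2 * MAX_TURNS:]
    table.put_item(Item={"space_name": space_name, "messages": messages})
    return messages
```

Here table is expected to behave like a boto3 DynamoDB Table resource, for example boto3.resource("dynamodb").Table("<your-table-name>").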

As you become more familiar with the solution, you may want to explore advanced Amazon Bedrock features to significantly expand its capabilities and make it more robust and versatile. Consider integrating Amazon Bedrock Guardrails to implement safeguards customized to your application requirements and responsible AI policies. Consider also expanding the assistant’s capabilities through function calling, to perform actions on behalf of users, such as scheduling meetings or initiating workflows. You could also use Amazon Bedrock Prompt Flows to accelerate the creation, testing, and deployment of workflows through an intuitive visual builder. For more advanced interactions, you could explore implementing Amazon Bedrock Agents capable of reasoning about complex problems, making decisions, and executing multistep tasks autonomously.
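As one example of these extensions, a guardrail can be attached to a Converse API call through its guardrailConfig parameter. The sketch below only builds the request arguments; the helper name build_converse_request is hypothetical, and the guardrail identifier must come from a guardrail that you have created in your account.

```python
from typing import Any, Dict, List, Optional


def build_converse_request(
    model_id: str,
    messages: List[Dict[str, Any]],
    guardrail_id: Optional[str] = None,
    guardrail_version: str = "DRAFT",
) -> Dict[str, Any]:
    """Build keyword arguments for converse(), optionally with a guardrail."""
    request: Dict[str, Any] = {"modelId": model_id, "messages": messages}
    if guardrail_id:
        # Ask Amazon Bedrock to apply the guardrail to this conversation turn.
        request["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return request
```

The result can be passed to the Amazon Bedrock runtime client as client.converse(**build_converse_request(...)).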

Performance optimization

The serverless architecture used in this post provides a scalable solution out of the box. As your user base grows or if you have specific performance requirements, there are several ways to further optimize performance. You can implement API caching to speed up repeated requests or use provisioned concurrency for Lambda functions to eliminate cold starts. To overcome API Gateway timeout limitations in scenarios requiring longer processing times, you can increase the integration timeout on API Gateway, or you might replace it with an Application Load Balancer, which allows for extended connection durations. You can also fine-tune your choice of Amazon Bedrock model to balance accuracy and speed. Finally, Provisioned Throughput in Amazon Bedrock lets you provision a higher level of throughput for a model at a fixed cost.

Clean up

In this post, you deployed a solution that lets you interact directly with a chat assistant powered by AWS from your Google Chat environment. The architecture incurs usage costs for several AWS services. First, you are charged for model inference and for the vector databases you use with Amazon Bedrock Knowledge Bases. AWS Lambda costs are based on the number of requests and compute time, and Amazon DynamoDB charges depend on read/write capacity units and storage used. Additionally, Amazon API Gateway incurs charges based on the number of API calls and data transfer. For more details about pricing, refer to Amazon Bedrock pricing.

There might also be costs associated with using Google services. For detailed information about potential charges related to Google Chat, refer to the Google Chat product documentation.

To avoid unnecessary costs, clean up the resources created in your AWS environment when you’re finished exploring this solution. Use the cdk destroy command to delete the AWS CDK stack previously deployed in this post. Alternatively, open the AWS CloudFormation console and delete the stack you deployed.

Conclusion

In this post, we demonstrated a practical solution for creating an AI-powered business assistant for Google Chat. This solution integrates Google Workspace with AWS-hosted data by using LLMs on Amazon Bedrock, Lambda for application logic, DynamoDB for session management, and API Gateway for secure communication. By implementing this solution, organizations can provide their workforce with a streamlined way to access AI-driven insights and knowledge bases directly within their familiar Google Chat interface, enabling natural language interaction and data-driven discussions without the need to switch between different applications or platforms.

Furthermore, we showcased how to customize the application to implement tailored business logic that can use other AWS services. This flexibility lets you tailor the assistant’s capabilities to your specific requirements and integrate it with your existing AWS infrastructure and data sources.

AWS offers a comprehensive suite of cutting-edge AI services to meet your organization’s unique needs, including Amazon Bedrock and Amazon Q. Now that you know how to integrate AWS services with Google Chat, you can explore their capabilities and build awesome applications!

About the Authors

Nizar Kheir is a Senior Solutions Architect at AWS with more than 15 years of experience spanning various industry segments. He currently works with public sector customers in France and across EMEA to help them modernize their IT infrastructure and foster innovation by harnessing the power of the AWS Cloud.

Lior Perez is a Principal Solutions Architect on the construction team based in Toulouse, France. He enjoys supporting customers in their digital transformation journey, using big data, machine learning, and generative AI to help solve their business challenges. He is also personally passionate about robotics and Internet of Things (IoT), and he constantly looks for new ways to use technologies for innovation.